Core problem: many manufacturers use AI without understanding whether their data can improve someone else's model or leave the intended control boundary

Main promise: buyers should treat training policy and deployment architecture as core buying criteria, not legal footnotes

Most AI buying conversations start with capability. In manufacturing, they should start with exposure.

The question is not only whether the tool answers well in a demo. The question is what happens to the information once operators, engineers, or analysts begin feeding it real factory context: constraints, incidents, costs, improvement logic, and the half-formed notes that only make sense inside your plant. If that material enters a model workflow without clear separation rules, the company may be creating value for a system it does not control—and weakening its own position every time someone pastes another paragraph into the box.

Why this question matters more than most buyers think

Manufacturing prompts are rarely harmless. They often carry process assumptions, cost structure, line constraints, supplier data, and the narrative of how problems were solved last quarter. Even when the user believes they have redacted enough, the remaining context can still be operationally specific. Training policy is where that exposure becomes structural: not a one-time leak, but a standing question about whether your operational language can be absorbed into a shared improvement loop that serves other customers, other products, or future model behavior you did not approve.

Training policy is not a small detail

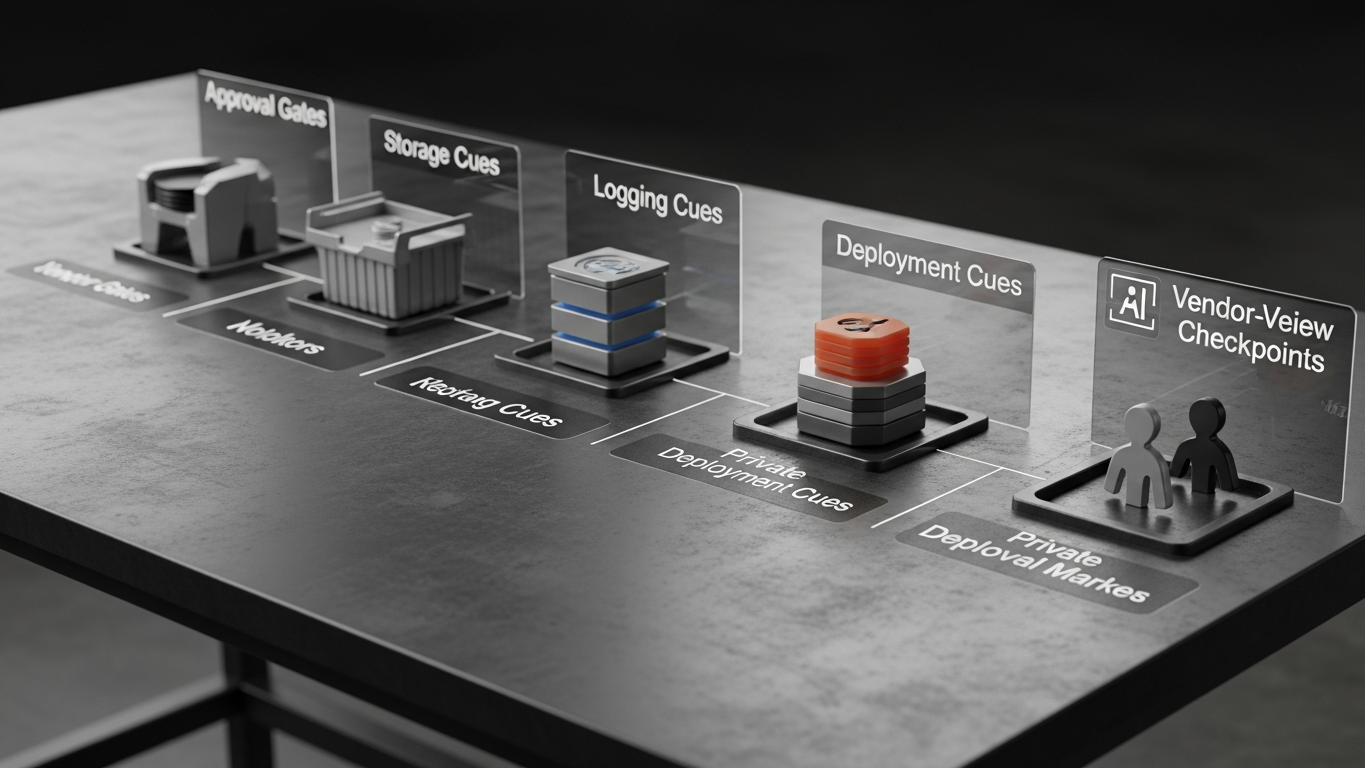

Many buyers still assume that if a vendor says “private” or “secure,” the problem is solved. It is not. The buyer needs to know whether client data is ever used to train or fine-tune the model; whether prompt content is stored; who can access logs; whether data can be retained outside the intended environment; and whether subprocessors are involved in processing. If the answer is vague, the risk is real—because vague defaults tend to favor the platform, not the plant.

The industrial risk is strategic, not only technical

If company know-how helps improve a model that serves other parties, the issue is not only confidentiality. It is strategic leakage. The organization may be giving away patterns about how it operates, optimizes, estimates, or responds to problems—patterns that are hard to “take back” once they have been folded into a vendor’s improvement cycle. That is a different category of loss than a single misplaced file.

Why legal language is not enough

Industrial teams often rely on procurement language or generic security claims. That is too weak for AI. A model relationship includes training behavior, inference boundaries, storage behavior, and governance and auditability. Each of those layers affects whether you retain meaningful control. A contract paragraph without a technical story is like a quality plan without a control plan: it reads well until someone asks how it is enforced.

What manufacturers should ask directly

Before approving an AI vendor, ask direct questions in plain business language. Does client data ever train the model? Are prompts, documents, or outputs stored beyond the session? Can the model run in a private or on-prem environment aligned to your segmentation? Who can inspect interaction history under what rules? How is access logged and governed?

If the answers cannot be stated clearly without a chain of follow-up calls, the buying risk is already too high for sensitive industrial workloads.

Minimum bar: training defaults are explicit; retention is explicit; subprocessors are named where they touch payloads; deployment mode is chosen before pilot data flows.
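One way to keep that minimum bar from being waved through is to record the vendor's answers as data and refuse to start a pilot while any of them is missing. The sketch below is illustrative only: `VendorAnswers`, its field names, and `minimum_bar_gaps` are hypothetical, not part of any vendor's product or API. It also assumes the stricter reading from this article, where an explicit "yes, we train on client data" counts as a gap rather than a pass.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class VendorAnswers:
    """Hypothetical record of a vendor's answers. None means 'not stated clearly'."""
    trains_on_client_data: Optional[bool] = None
    retention_days: Optional[int] = None                 # 0 = nothing kept beyond the session
    payload_subprocessors: Optional[List[str]] = None    # [] = none touch payloads
    deployment_mode: Optional[str] = None                # e.g. "on-prem", "private-cloud"


def minimum_bar_gaps(answers: VendorAnswers) -> List[str]:
    """Return the minimum-bar items that are still open; an empty list means the bar is met."""
    gaps: List[str] = []
    if answers.trains_on_client_data is None:
        gaps.append("training default not stated")
    elif answers.trains_on_client_data:
        # Assumption: explicit training on client data is treated as a failure.
        gaps.append("client data trains the model")
    if answers.retention_days is None:
        gaps.append("retention not stated")
    if answers.payload_subprocessors is None:
        gaps.append("subprocessors not named")
    if answers.deployment_mode is None:
        gaps.append("deployment mode not chosen")
    return gaps


if __name__ == "__main__":
    # A vendor who answered only the training question still has three open items.
    partial = VendorAnswers(trains_on_client_data=False)
    for gap in minimum_bar_gaps(partial):
        print("open:", gap)
```

The point of the structure is that "not stated" is a distinct value from "no": a vague answer stays visible as an open item instead of being read as reassurance.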

A serious industrial AI provider should make three things explicit: your data does not train someone else’s model; deployment boundaries are controlled; human approval remains in the loop for important decisions. That is the difference between AI convenience and AI responsibility.

DBR77 Vector is positioned for industrial environments where buyers need firmer assurances: no training on client data, private deployment options, industrial reasoning, and stronger governance. That shifts the buying question from “what can the model do?” to “what control do we keep while using it?”

If your team cannot answer whether your data trains someone else’s model, you do not yet understand your AI exposure. Manufacturers should never treat that as a secondary question.

DBR77 Vector helps manufacturers use industrial AI without training the model on client data and with stronger deployment control. Review security or book a demo.