Core problem: business teams often see security objections as friction, while security teams often see real exposure that has not been designed out of the AI initiative

Main promise: manufacturers should treat security resistance as a signal to improve deployment fit, governance, and data handling rather than as an obstacle to bypass

Many AI projects stall when security gets involved. Business teams often read that as resistance to progress. Sometimes it is. But in manufacturing, security teams are right more often than the rest of the organization wants to admit, because they are trained to see the parts of the system that demos hide: data paths, retention, access, subprocessors, and what happens when something goes wrong at two in the morning.

Security teams usually push back when deployment boundaries are unclear, data retention rules are vague, access control is weak, subprocessors are unknown, or auditability is thin. These are not small details in industrial environments. They shape whether AI can be trusted around sensitive operational knowledge—and whether the organization can explain its choices under review.

Why business teams misread the situation

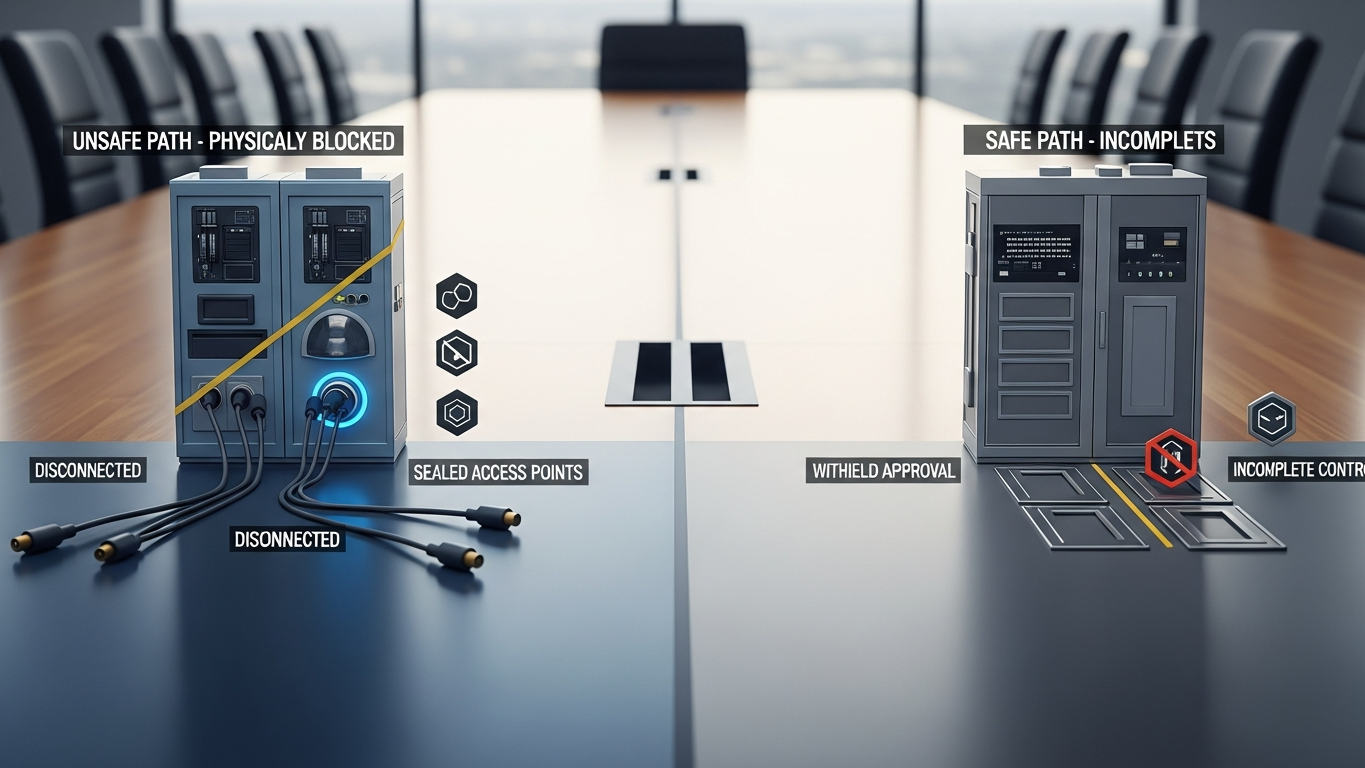

When teams see clear upside in AI, they often treat security questions as delays. That is a mistake. A blocked project does not necessarily mean the initiative is bad. It may mean the operating model is incomplete: the wrong deployment mode was chosen, governance was too thin, data sensitivity was underestimated, or convenience was prioritized over control. Seen that way, security is not the enemy of value. Security is the early warning system for a program that will not survive scale.

When security is clearly right

Security is usually right to slow or stop AI when deployment boundaries are unclear, client data may train the model, sensitive files can move outside intended control, no strong review or approval model exists, or auditability is weak. In those cases, the project is not ready for serious industrial use—regardless of how exciting the prototype looks.
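The conditions in the paragraph above can be written down as an explicit readiness gate. The following is a minimal sketch, not a prescribed tool: the `DeploymentReview` record, its field names, and both helper functions are hypothetical and exist only to show how each objection maps to a yes/no check that a reviewer can answer with evidence.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class DeploymentReview:
    """Hypothetical review record; every field name is an illustrative assumption."""

    deployment_boundary_documented: bool
    client_data_excluded_from_training: bool
    sensitive_files_stay_in_controlled_scope: bool
    human_approval_step_defined: bool
    audit_log_available: bool


def blocking_findings(review: DeploymentReview) -> list[str]:
    """Return the objections from the text that this review has not yet resolved."""
    # Each key is an objection named in the article; each value is True
    # when the review shows that the objection has been addressed.
    checks = {
        "deployment boundaries are unclear": review.deployment_boundary_documented,
        "client data may train the model": review.client_data_excluded_from_training,
        "sensitive files can move outside intended control": review.sensitive_files_stay_in_controlled_scope,
        "no strong review or approval model exists": review.human_approval_step_defined,
        "auditability is weak": review.audit_log_available,
    }
    return [finding for finding, resolved in checks.items() if not resolved]


def ready_for_industrial_use(review: DeploymentReview) -> bool:
    """The project passes the gate only when no blocking finding remains."""
    return not blocking_findings(review)
```

Calling `blocking_findings` on a review returns the open objections by name, which gives the business team and the security team the same list to work from instead of a general sense that the project is "blocked".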

The real problem is often design, not security

Many AI teams try to solve objections late. By that point, security looks like the blocker. In reality, the issue often started earlier, when architecture and data class were treated as “details we can sort out after the pilot.” Late fixes are expensive. They also train the organization to treat governance as paperwork instead of product design.

Better AI projects include security logic from the start

Manufacturers should bring security thinking into AI design early through deployment choices, training-policy clarity, access controls, traceability, and human approval. That changes security from a gatekeeper role into part of responsible adoption—and it usually accelerates the program over time, because the first “real” use cases do not die in review limbo.

DBR77 Vector is positioned for industrial AI environments where security concerns are not side issues: private deployment options, no training on client data, industrial reasoning, and stronger governance expectations. That makes security easier to integrate into the buying logic from day one.

Security teams do block AI projects. In manufacturing, they are often right when the deployment model, data policy, and governance standard are not strong enough yet. The answer is not to bypass security. It is to build a better AI operating model—one that produces evidence, not promises.

Plant checkpoint

Treat this article as a decision tool, not background reading. Before the next steering meeting, ask for one artifact that proves your posture: an architecture diagram, a training-policy excerpt, a log sample, a signed workflow classification, or a promotion record. If the room can only tell stories, you are still running a pilot, whatever the program is called. Manufacturing AI matures when evidence becomes routine: the same discipline you already expect before a line release, a supplier change, or a major IT cutover. That is the shift from excitement to infrastructure, and it is what keeps programs coherent across audits, turnover, and multi-site expansion.

If leadership wants one crisp decision habit, make it this: name what must be true before usage expands, then review whether it is true on a fixed cadence. That is how governance stops being a narrative comfort and becomes an operating metric your plants can execute.
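That habit is simple enough to express as a small check. The sketch below is illustrative only: the `Precondition` record, the function names, and the 90-day default cadence are assumptions, not values taken from any standard or product. It treats a precondition as satisfied only if it is true and was reviewed within the cadence, so a stale "yes" does not count.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Precondition:
    """Hypothetical record of one thing that must be true before usage expands."""

    name: str
    is_true: bool
    last_reviewed: date


def review_overdue(p: Precondition, today: date, cadence_days: int = 90) -> bool:
    """True when the last review is older than the agreed cadence (assumed: 90 days)."""
    return today - p.last_reviewed > timedelta(days=cadence_days)


def may_expand_usage(
    preconditions: list[Precondition], today: date, cadence_days: int = 90
) -> bool:
    """Allow expansion only if every named precondition holds and was recently reviewed."""
    # An empty list means nothing was named, so expansion is not approved by default.
    if not preconditions:
        return False
    return all(
        p.is_true and not review_overdue(p, today, cadence_days)
        for p in preconditions
    )
```

The count of preconditions that are true and currently reviewed is a number a plant can report each period, which is one way to turn governance into a metric.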

DBR77 Vector helps manufacturers address legitimate security objections through private deployment, stronger data policy, and governance-ready AI design.