Core problem: many industrial teams underestimate how dangerous generic public AI can be when used with sensitive operational data.

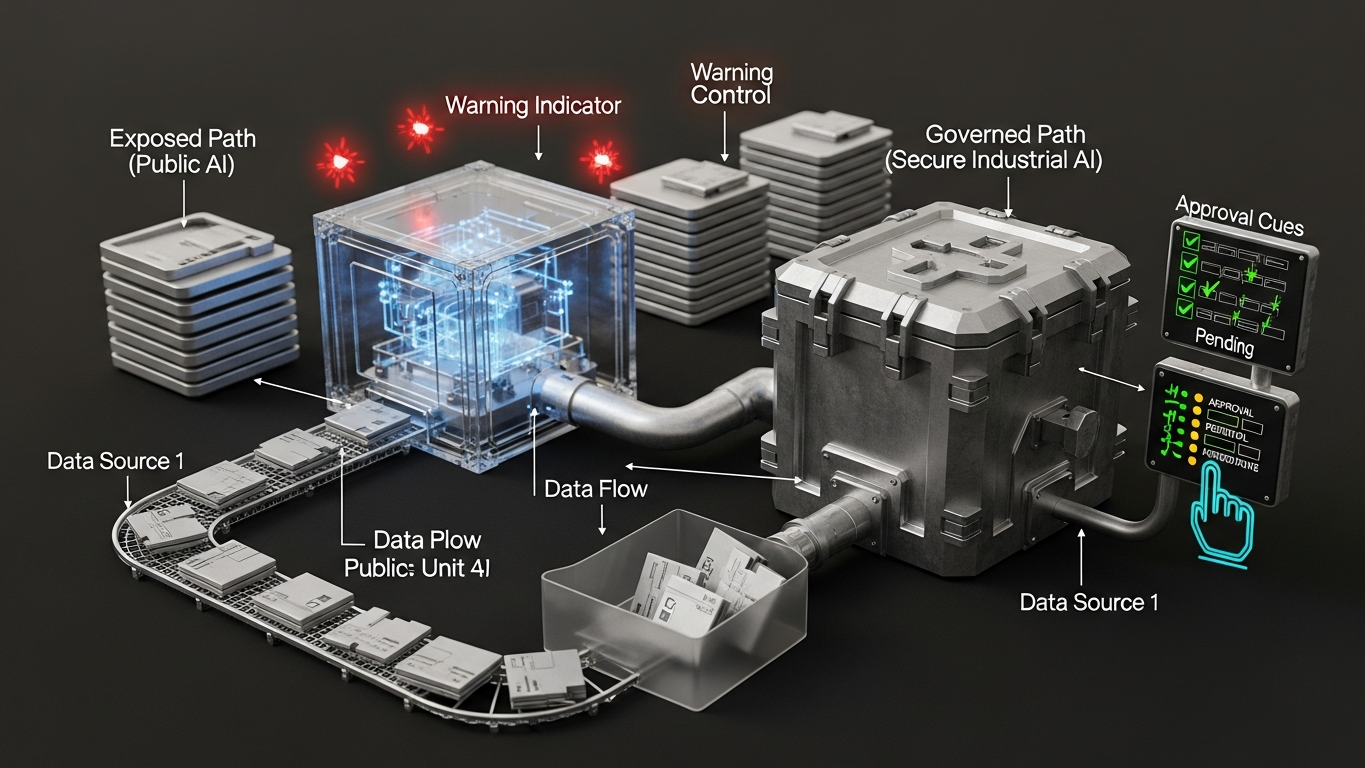

Main promise: industrial AI must protect data, reasoning, deployment boundaries, and human accountability.

The browser tab opens in seconds. That is the trap.

For manufacturing, the security question is not whether the model can draft a crisp paragraph. It is whether your organization still holds a defensible perimeter around operational knowledge, decision support, and evidence when work moves through a tool built for mass-market convenience. Public AI becomes a security risk when prompts, uploads, or follow-on actions carry plant-specific facts and the workflow has no enforceable boundary for data path, retention, training use, logging, or accountability. At that moment you are not “trying AI.” You are exporting part of your decision stack into an environment you cannot govern the way you govern MES, ERP, or QMS access.

This article holds that perimeter to a serious standard. How factory data differs from office data, and what upload habits look like in the wild, are treated more directly in companion pieces on data class and public upload behavior. Here, the focus is the control model: what breaks when the perimeter dissolves, and what leadership should demand before industrial work touches intelligence tooling.

A short plant-side moment

Picture a late shift. An engineer pastes a bottleneck summary and rough capacity numbers into a public chat to polish a handover note. Nothing about the interaction feels like a security incident. Yet the text still encodes line reality, supplier timing, and the internal logic of how the plant is trying to improve. Once that content enters a public inference path, you must assume it can be stored, logged, processed in jurisdictions you did not choose, and handled under training and support policies you do not control. Even without a headline breach, you have moved operational reasoning across a boundary you cannot audit the way you audit internal infrastructure. The damage is often quiet: not a stolen password, but a slow erosion of control over what the company knows, how it decides, and what it can later prove.

What changes when the perimeter moves

Industrial security teams are practiced with networks, endpoints, and application access. Public AI adds a different egress path: human convenience. When process details, financial assumptions, or failure narratives enter that path, leadership loses predictable answers to questions that matter under stress. Where did the payload go, and who can see it later? Can it influence future model behavior outside a contract you would sign for a plant system? Can you reconstruct who used what in support of a consequential decision?

That is a governance and assurance problem as much as a confidentiality problem. It is also a cultural problem, because the interface feels personal and low stakes even when the content is not.

The decision standard, not a fear stack

Evaluate public AI the way you would evaluate exposing a system of record: by consequence and by evidence. If the workflow touches layouts, costs, supplier position, quality history, or anything that would be awkward to explain to a customer or regulator, public tooling is the wrong default unless you have an explicit, written exception and a disposable-data rule that everyone understands. If the task is generic and fully disposable, with no bridge back to internal systems, public tools may remain in scope for some teams. The industrial failure mode is the gray zone: copy-paste from ERP screens, half-redacted spreadsheets, screenshots with timestamps, and “just this once” uploads that quietly become habit.
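For teams that want to turn this standard into something reviewable, the rule can be written down as a small decision function. What follows is a minimal sketch, not a reference implementation: the `Task` fields, the `Verdict` values, the topic labels, and the choice to send gray-zone cases to review are all assumptions made for illustration, and a real policy would come from your own data classification.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    ALLOW = "allow"
    BLOCK = "block"
    NEEDS_REVIEW = "needs_review"


# Hypothetical topic labels, mirroring the examples named in the text.
SENSITIVE_TOPICS = frozenset(
    {"layout", "cost", "supplier_position", "quality_history"}
)


@dataclass(frozen=True)
class Task:
    topics: frozenset[str]             # labels a reviewer assigned to the content
    disposable: bool                   # the data can be discarded with no loss
    bridges_to_internal_systems: bool  # e.g. ERP copy-paste, screenshots
    written_exception: bool = False    # explicit, documented exception exists


def public_tool_verdict(task: Task) -> Verdict:
    """Apply the decision standard to one proposed use of a public tool."""
    if task.topics & SENSITIVE_TOPICS:
        # Sensitive content: public tooling is the wrong default unless a
        # written exception exists and the disposable-data rule is met.
        if task.written_exception and task.disposable:
            return Verdict.ALLOW
        return Verdict.BLOCK
    if task.disposable and not task.bridges_to_internal_systems:
        # Generic, disposable, and disconnected from internal systems.
        return Verdict.ALLOW
    # Everything else is the gray zone: route it to a human reviewer.
    return Verdict.NEEDS_REVIEW
```

The value of writing the rule this way is less the code than the forcing function: every gray-zone habit has to land in one of three named outcomes that someone can later audit.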

What serious industrial AI makes explicit

A perimeter you can defend includes clear statements on where inference runs and where payloads rest; whether client content can train or tune the vendor model; identity, logging, and review expectations for high-impact outputs; and how human approval stays in the loop when stakes rise. If those answers stay vague, assume the risk is higher than the slide deck implies. Convenience is not a control strategy. Classification and boundaries are.

Before you expand usage: confirm the workflow’s data class; confirm the deployment boundary matches that class; confirm training and retention are stated in language operations and security can trace; confirm you can explain the path from input to decision under review.
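The four confirmations above can also be tracked as an explicit checklist, so that "are we ready to expand?" has an answer someone can inspect. This is a sketch under stated assumptions: the `ExpansionChecklist` class, its field names, and the helper functions are hypothetical and simply restate the four checks in the paragraph above.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class ExpansionChecklist:
    # Hypothetical field names, one per confirmation in the text.
    data_class_confirmed: bool
    boundary_matches_data_class: bool
    training_and_retention_traceable: bool
    input_to_decision_path_explainable: bool


def open_items(checklist: ExpansionChecklist) -> list[str]:
    """Return the names of confirmations that are still outstanding."""
    return [f.name for f in fields(checklist) if not getattr(checklist, f.name)]


def ready_to_expand(checklist: ExpansionChecklist) -> bool:
    """Usage may expand only when every confirmation is in place."""
    return not open_items(checklist)


if __name__ == "__main__":
    pilot = ExpansionChecklist(
        data_class_confirmed=True,
        boundary_matches_data_class=True,
        training_and_retention_traceable=False,
        input_to_decision_path_explainable=True,
    )
    print(ready_to_expand(pilot))
    print(open_items(pilot))
```

A single outstanding item blocks expansion by design, which matches the point that vague answers should be read as higher risk.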

DBR77 Vector is built as secure industrial intelligence inside the DBR77 ecosystem: proprietary industrial reasoning, deployment options that keep factory knowledge inside buyer-controlled boundaries, client data excluded from model training, and human approval where judgment must remain accountable. The buying shift here is from “can we use AI?” to “does this tool preserve the same perimeter discipline we expect from plant-critical systems?”

Public AI is a security risk for industrial operations when it dissolves the perimeter around operational knowledge without replacing it with architecture, contract, and operating rules you can inspect. The organizations that win the next decade of manufacturing intelligence will be the ones that treat that perimeter as product design, not as an afterthought.

DBR77 Vector gives manufacturers a safer industrial AI path with private deployment options, no training on client data, and stronger domain fit. Review security or book a demo.