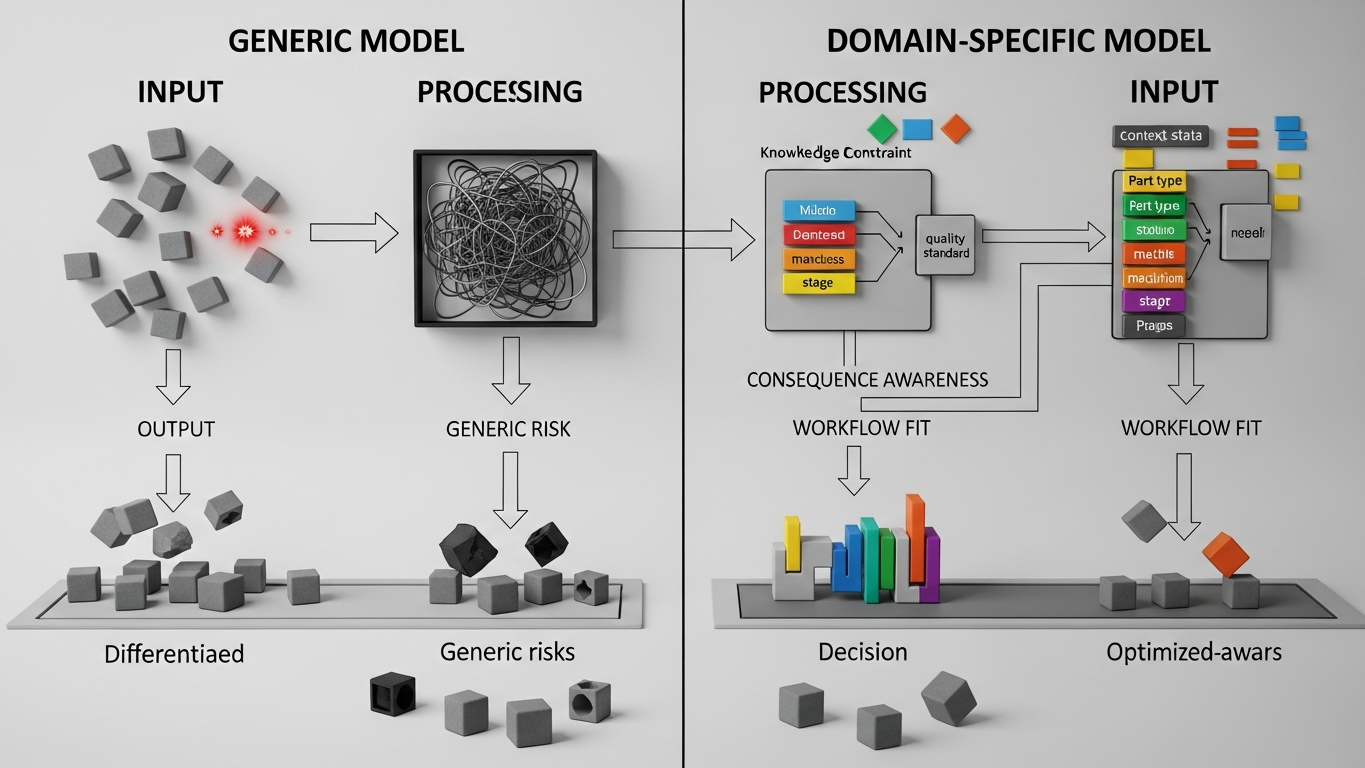

Core problem: many buyers treat headline model size or benchmark prestige as a proxy for manufacturing performance, even when the work is anchored in plant-specific definitions, constraints, and evidence

Main promise: in manufacturing, domain-grounded fit and reference fidelity often matter more than raw scale, because useful answers must align with your internal truth, not only with fluent general language

Headline parameter counts and leaderboard chatter create a simple story: bigger equals better. On a shop floor, that story breaks quickly. Many high-value questions are not won by the largest generic model. They are won by systems that respect your nomenclature, your BOMs and routings, your quality rules, and the way mistakes actually show up in your process.

Bigger generic models improve average performance across broad internet-style tasks. They do not automatically ingest your plant-specific references, your signed-off procedures, or the informal constraints experts carry. For manufacturing decisions, marginal gains from scale often lose to errors that come from missing or misinterpreting your context. Domain-grounded industrial AI is positioned to close that gap by anchoring reasoning in manufacturing and transformation practice and by fitting evaluation to plant-relevant test cases—not to generic completion quality alone.

The model-size myth in industrial buying

The myth sounds like this: if we deploy the largest general model, we have covered “AI for manufacturing.” What that skips is reference dependence. Correctness in plant work is frequently defined against internal masters: part numbers, revision levels, control plans, customer-specific rules, and supplier agreements. A larger model does not grant automatic access to those references unless your architecture deliberately supplies, constrains, and validates them. Scale without fit can increase confidence faster than it increases correctness—and confidence is the dangerous part.

Why domain grounding changes the error profile

In industrial settings, a useful answer is not only fluent. It is stable against questions like whether it aligns with approved routing and inspection points, whether it uses naming and units the way maintenance and quality expect, whether it leaves obvious hooks for SME review where data is thin, and whether it fails visibly when context is missing instead of inventing a smooth bridge. Those behaviors track domain grounding and evaluation discipline more than parameter count.

Generic scale can still sound authoritative and be shallow

A bigger generic model may produce polished language while still missing which document revision is binding for a customer, which deviation path applies when a dimension sits out of spec, or how your ERP or QMS fields encode the constraint in question. Confidence and operational truth diverge. That divergence is costly when teams act on a well-written paragraph that was never checked against plant evidence.

Manufacturing needs plant-calibrated reasoning, not only completion

Industrial AI should help teams reason against their constraints—not only generate smoother text about manufacturing in general. That points to interpretation anchored in manufacturing context, structuring decisions so gaps and conflicts surface early, and test plans that use real internal scenarios rather than demo prompts. Those requirements map to domain fit and internal validation practice. They are only weakly predicted by how large the base model is on public benchmarks.

What to compare instead of headline model size

When you shortlist approaches, stress-test fit rather than prestige. Look at reference fidelity: how well outputs respect your masters, naming, and units without constant correction. Run plant test cases: the same handful of hard internal questions across candidates, and watch who fails silently versus who flags uncertainty. Examine SME load: whether scale reduces expert rework or only speeds up first drafts that still need heavy repair. Ask whether a step up in generic model size changes outcomes on your question set—or mostly changes tone. Keep governance, deployment, and vendor category in their own review; they are not substitutes for domain-grounded reasoning.

Headline size is one input. It is rarely the whole explanation for manufacturing usefulness.

DBR77 Vector is positioned around proprietary industrial reasoning and manufacturing context, not around winning a generic scale race. That positioning assumes buyers will hold industrial AI to plant-relevant evidence and fit, alongside the deployment and training boundaries covered elsewhere in the Vector library.

In manufacturing, domain knowledge and reference fidelity often beat bigger generic models because the hard part is aligning with how your plant actually runs—not sounding intelligent about factories in the abstract. Hold every option to the same internal test cases. Let scale earn its place there, not on a leaderboard alone.

DBR77 Vector gives manufacturers industrial reasoning and stronger domain fit instead of relying on generic model prestige alone. Explore products using Vector or Review security.