Core problem: vendor language around "enterprise-grade," "private," and "secure" often masks unclear training policies, data paths, and deployment details that matter in factories.

Main promise: buyers can translate marketing claims into concrete questions about deployment boundaries, subprocessors, logging, and model governance before shortlisting vendors.

“Secure” is not a specification. It is a promise that only becomes meaningful when tied to architecture, contracts, and evidence. For manufacturing buyers, vague security claims are a decision risk—not a comfort signal—because the plant’s worst-case questions are specific: where did payloads go, who could access them, what persisted, and how would we explain that under review?

AI security claims are too vague for industrial buyers when they do not state where data flows, who can access it, whether it trains a model, which deployment modes exist, how decisions are logged, and how incidents are handled. Replace slogans with a written evidence checklist and refuse to advance procurement without answers mapped to your plant systems and data classes. If a vendor cannot answer in writing, assume the control story is incomplete—not secretly excellent.

Why vague claims persist

Generic AI vendors compete on speed and familiarity. Manufacturing buyers compete on uptime, safety, regulatory exposure, and long asset life. The vocabulary overlaps; the requirements do not. That mismatch creates a fog where “enterprise” means different things to different people—unless you force definitions.

Turn slogans into proof requests

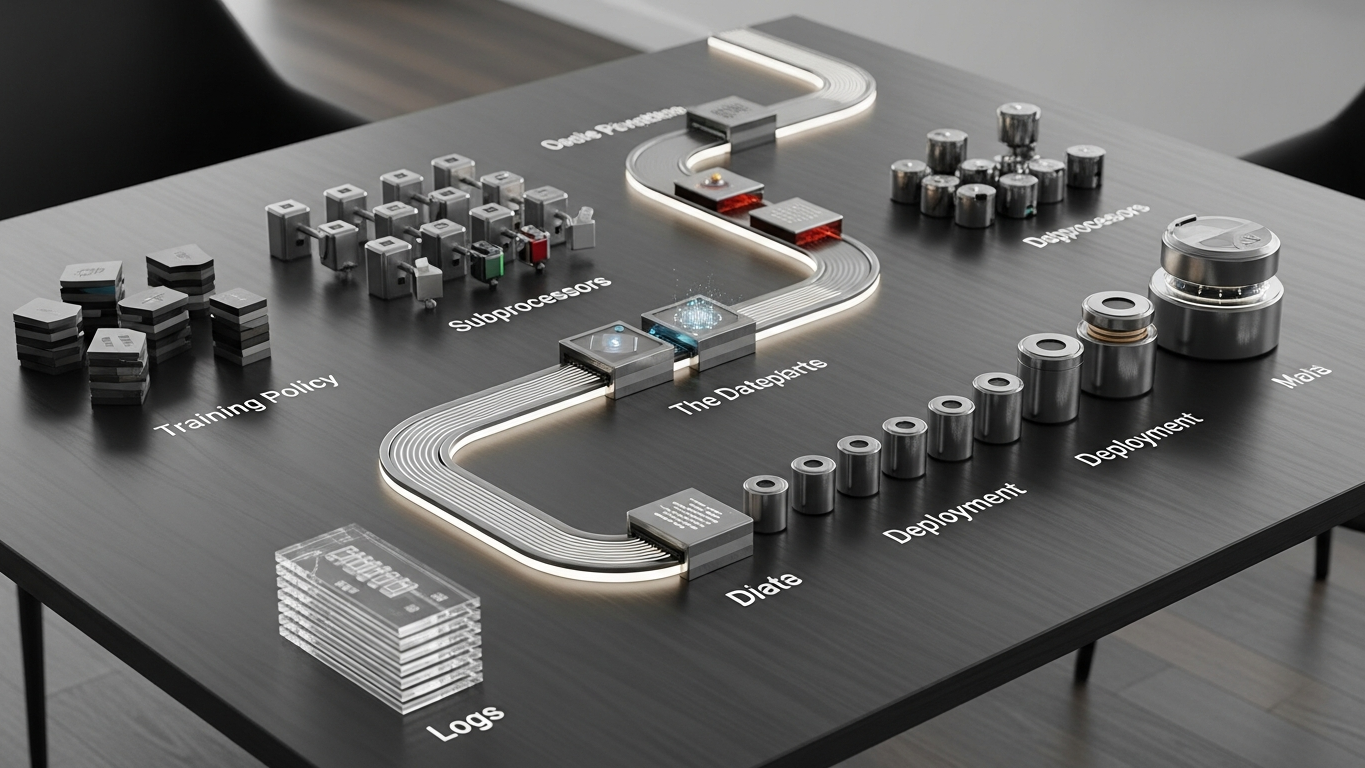

Ask vendors to state every data path from source system to model runtime and back, including admin consoles. Get written confirmation of whether client content can be used for training, fine-tuning, evaluation, or human review for product improvement. Request a list of subprocessors and regions for storage, inference, logging, and support access. Ask which deployment options exist and what differs technically between them. Request sample audit artifacts: retention schedules, access logs, and change records for model updates. Require defined incident categories, notification timelines, and forensic cooperation commitments.
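One way to keep the six proof requests above honest is to track them as a written checklist. The sketch below is a minimal illustration, assuming each answer is recorded as text plus a flag for whether it arrived in writing; the names `ProofRequest`, `open_gaps`, and `ready_to_shortlist` are hypothetical, not part of any vendor's or tool's API. It follows the rule stated earlier: an item without a written answer counts as an open gap.

```python
from dataclasses import dataclass


@dataclass
class ProofRequest:
    """One written evidence request sent to a vendor (hypothetical structure)."""
    topic: str
    question: str
    answer: str = ""          # vendor's response, recorded verbatim
    in_writing: bool = False  # True only if the answer arrived as a written artifact


# The six proof requests, phrased as questions.
CHECKLIST = [
    ProofRequest("data_paths", "Every data path from source system to model runtime and back, including admin consoles?"),
    ProofRequest("training_policy", "Can client content be used for training, fine-tuning, evaluation, or human review?"),
    ProofRequest("subprocessors", "Which subprocessors and regions handle storage, inference, logging, and support access?"),
    ProofRequest("deployment", "Which deployment options exist, and what differs technically between them?"),
    ProofRequest("audit_artifacts", "Sample retention schedules, access logs, and model-update change records?"),
    ProofRequest("incidents", "Incident categories, notification timelines, and forensic cooperation commitments?"),
]


def open_gaps(requests: list[ProofRequest]) -> list[str]:
    """Return topics that still lack a non-empty written answer.

    Verbal-only or missing answers are treated as an incomplete control
    story, not as a hidden strength.
    """
    return [r.topic for r in requests if not (r.in_writing and r.answer.strip())]


def ready_to_shortlist(requests: list[ProofRequest]) -> bool:
    """Advance procurement only when every request has a written answer."""
    return not open_gaps(requests)


# Example: only the data-path question has a written answer so far.
CHECKLIST[0].answer = "Data-flow diagram attached (rev 3)"
CHECKLIST[0].in_writing = True
print(open_gaps(CHECKLIST))
# ['training_policy', 'subprocessors', 'deployment', 'audit_artifacts', 'incidents']
print(ready_to_shortlist(CHECKLIST))  # False
```

Keeping `in_writing` separate from `answer` makes the difference between "the sales engineer said so on a call" and "we have it on paper" visible in the record.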

If a vendor cannot answer without a chain of follow-up meetings, treat that as a signal, not as scheduling friction.

What industrial-grade sounds like

When you hear “enterprise secure,” you should hear identity model, segmentation, encryption in transit and at rest, and key custody. When you hear “private AI,” you should hear dedicated runtime boundary, defined egress, and clarity on tenant separation where it matters to your risk model. When you hear “we do not train on your data,” you should hear contract clause, technical controls, subprocessors excluded, and audit rights. When you hear “SOC 2,” you should hear scope letter, systems in scope, frequency, and exceptions. Certificates help. They do not replace an architecture narrative.
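The translations above can be kept as a lookup table so that a reviewer lists, per slogan, which expected evidence items have not been supplied yet. This is a minimal sketch: the claim keys and evidence labels are paraphrased from this section, and `EXPECTED_EVIDENCE` and `missing_evidence` are hypothetical names chosen for illustration.

```python
# Slogan -> evidence a buyer should expect (paraphrased from the section above).
EXPECTED_EVIDENCE: dict[str, set[str]] = {
    "enterprise secure": {"identity model", "segmentation",
                          "encryption in transit", "encryption at rest", "key custody"},
    "private ai": {"dedicated runtime boundary", "defined egress", "tenant separation"},
    "we do not train on your data": {"contract clause", "technical controls",
                                     "subprocessors excluded", "audit rights"},
    "soc 2": {"scope letter", "systems in scope", "frequency", "exceptions"},
}


def missing_evidence(claim: str, supplied: set[str]) -> set[str]:
    """Return the expected evidence items not yet supplied for a claim.

    An unknown claim raises KeyError on purpose: a slogan with no agreed
    definition should be defined before any vendor is scored against it.
    """
    expected = EXPECTED_EVIDENCE[claim.strip().lower()]
    return expected - supplied


# Example: a SOC 2 report was shared, but without a scope letter or exceptions.
gaps = missing_evidence("SOC 2", {"systems in scope", "frequency"})
print(sorted(gaps))  # ['exceptions', 'scope letter']
```

Raising on an unrecognized slogan, rather than returning an empty set, reflects the point that a certificate or adjective without a definition should not pass silently.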

Treat claims as blocking issues when the product cannot separate development access from production data paths, training policy is described as “usually” instead of contract-defined, subprocessors can change without notice rights you can enforce, or logging cannot support reconstruction of a recommendation that influenced a line change.
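The four blocking conditions above can be expressed as an explicit gate. The sketch assumes the buyer has already reduced each vendor's written answers to a handful of yes/no facts; the `VendorFacts` fields and the `blocking_issues` function are hypothetical labels for this illustration, not a standard schema.

```python
from dataclasses import dataclass


@dataclass
class VendorFacts:
    """Facts recorded from a vendor's written answers (hypothetical fields)."""
    dev_access_separated_from_prod_data: bool
    training_policy_in_contract: bool        # False if described as "usually" or similar
    subprocessor_change_notice_enforceable: bool
    logs_reconstruct_recommendations: bool   # can a recommendation behind a line change be rebuilt?


def blocking_issues(f: VendorFacts) -> list[str]:
    """List every blocking issue; an empty list means none of the four applies."""
    issues = []
    if not f.dev_access_separated_from_prod_data:
        issues.append("development access not separated from production data paths")
    if not f.training_policy_in_contract:
        issues.append("training policy not contract-defined")
    if not f.subprocessor_change_notice_enforceable:
        issues.append("subprocessors can change without enforceable notice rights")
    if not f.logs_reconstruct_recommendations:
        issues.append("logging cannot reconstruct a recommendation behind a line change")
    return issues


# Example: the vendor says it "usually" does not train on client data.
facts = VendorFacts(True, False, True, True)
print(blocking_issues(facts))  # ['training policy not contract-defined']
```

Returning the reasons instead of a single boolean lets the shortlist decision cite exactly which condition failed.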

Vague security claims fail your checklist the moment they cannot be tied to deployment boundaries, training policy, subprocessors, and incident behavior under pressure. Hold Vector to the same bar and verify each of its claims in writing: proprietary industrial AI trained on factory transformation knowledge; on-premise, private API, and isolated deployment options; client data excluded from model training; and reasoning aimed at industrial work rather than generic chat. That way procurement compares facts, not adjectives.

Industrial AI procurement is not a taste test. It is infrastructure selection. Demand language that maps to deployment boundaries, data sovereignty, training policy, auditability, and incident response—then compare vendors on those facts.

Plant checkpoint

Treat “When AI Security Claims Are Too Vague for Industrial Buyers” as a decision tool, not background reading. Before the next steering meeting, ask for one artifact that proves your posture: an architecture diagram, a training-policy excerpt, a log sample, a signed workflow classification, or a promotion record. If the room can only tell stories, you are still operating in pilot mode. Manufacturing AI matures when evidence becomes routine, held to the same discipline you already expect before a line release, a supplier change, or a major IT cutover. That is the shift from excitement to infrastructure, and it is what keeps programs coherent across audits, turnover, and multi-site expansion.

If leadership wants one crisp decision habit, make it this: name what must be true before usage expands, then review whether it is true on a fixed cadence. That is how governance stops being a comforting narrative and becomes an operating discipline your plants can execute.
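The decision habit above, conditions that must hold plus a fixed review cadence, can be sketched as a small expansion gate. This is an illustration under stated assumptions: `GateCondition`, `overdue`, and `may_expand` are hypothetical names, and the condition statements and dates in the example are made-up values, not recommendations.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class GateCondition:
    """Something that must be true before AI usage expands (hypothetical structure)."""
    statement: str       # e.g. "architecture diagram matches deployed system"
    is_true: bool        # result of the most recent review
    last_reviewed: date


def overdue(conditions: list[GateCondition], today: date, cadence: timedelta) -> list[str]:
    """Return conditions whose last review is older than the fixed cadence."""
    return [c.statement for c in conditions if today - c.last_reviewed > cadence]


def may_expand(conditions: list[GateCondition], today: date, cadence: timedelta) -> bool:
    """Expand usage only if every condition holds and none is overdue for review."""
    return all(c.is_true for c in conditions) and not overdue(conditions, today, cadence)


# Illustrative values only.
gates = [
    GateCondition("architecture diagram matches deployed system", True, date(2024, 1, 10)),
    GateCondition("access-log sample reviewed", True, date(2023, 11, 2)),
]
today = date(2024, 2, 1)
print(overdue(gates, today, timedelta(days=60)))     # ['access-log sample reviewed']
print(may_expand(gates, today, timedelta(days=60)))  # False
```

A condition that was true at its last review but has gone stale still blocks expansion, which is what turns the cadence into an operating check rather than a calendar reminder.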

DBR77 Vector supports evidence-led evaluation with clear deployment boundaries and a policy of excluding client data from model training, aligned to industrial governance. Review security or book a demo.