Core problem: teams oscillate between banning AI and trusting it too much because they lack a simple decision rule for approval gates.

Main promise: manufacturers can separate low-risk AI assistance from high-consequence decisions using a consequence-based approval matrix tied to systems and spend.

Human approval is not a philosophical stance. It is a control you apply where mistakes are expensive or irreversible. Manufacturing needs a middle path: fast enough to be used, strict enough to be safe, and explicit enough that night-shift behavior matches day-shift intent.

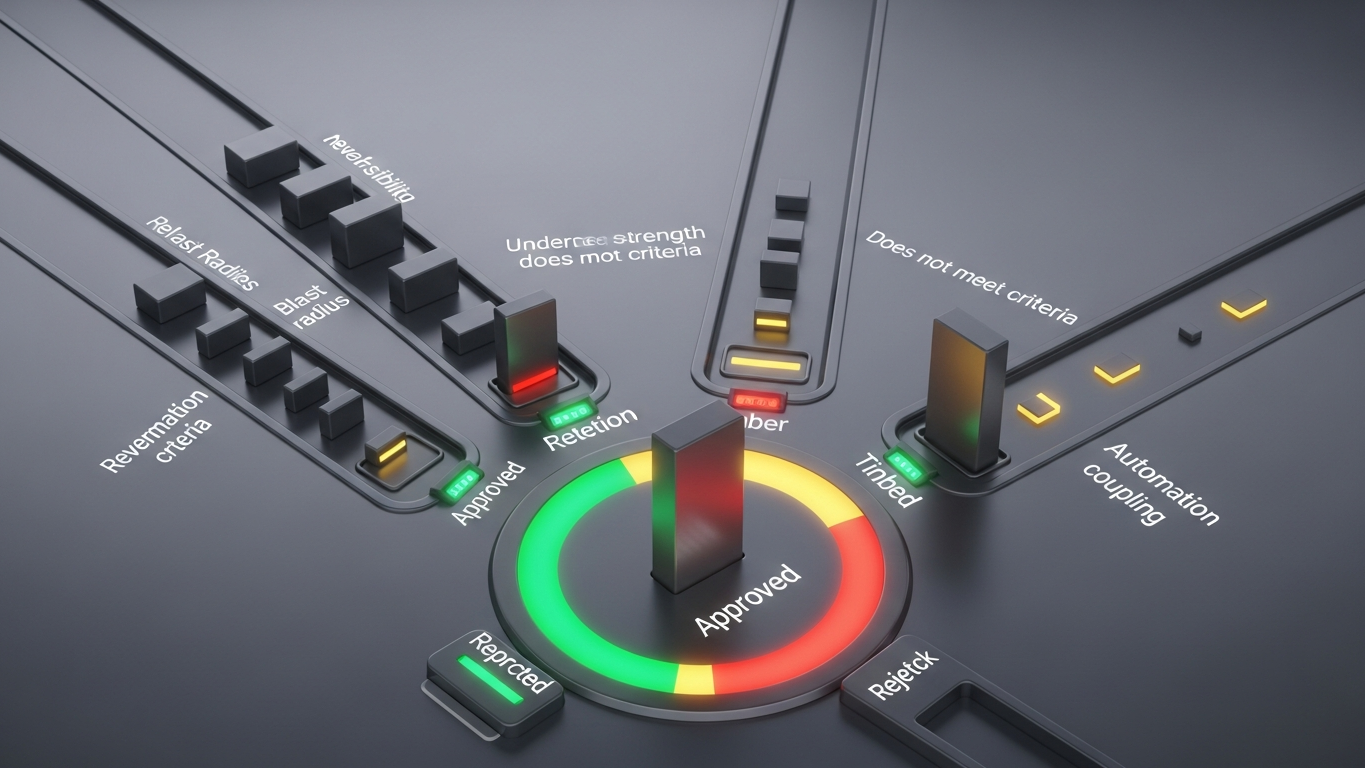

Require human approval when an AI output can change physical reality, financial commitments, customer quality promises, safety systems, regulated records, or production schedules without an easy rollback. Approval is usually unnecessary when the output is exploratory, internal-only, and easily verified, and when it cannot trigger automated actions or external commitments. The failure mode to avoid is treating both classes the same: either slowing everything down or trusting everything too much.

Why a simple rule beats blanket policies

Blanket bans slow adoption. Blanket trust creates incidents. A consequence-based matrix turns debates into classification: what are we doing, what can go wrong, and what record do we need if someone asks later?

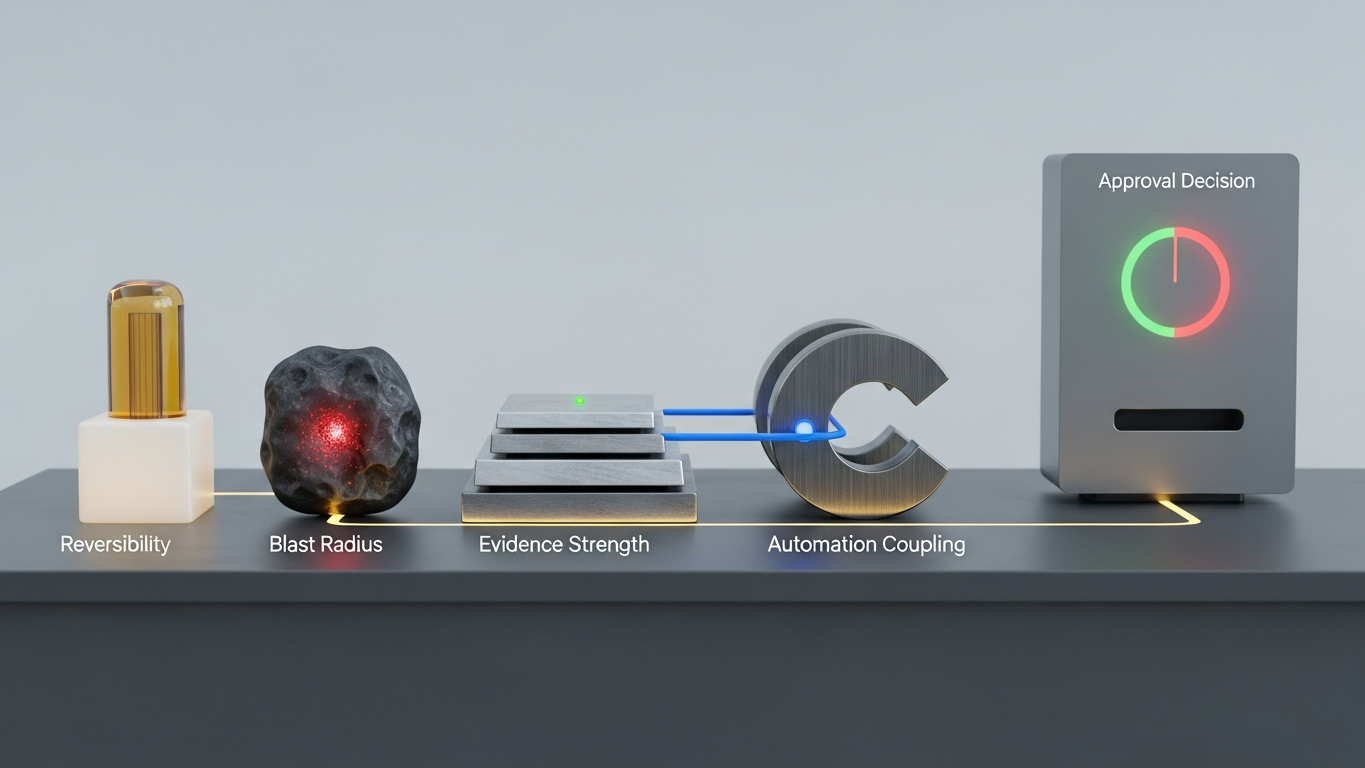

The approval matrix: four questions

Ask about reversibility: can you undo the effect in minutes without customer or regulatory harm? Ask about blast radius: does a mistake propagate across lines, sites, or suppliers? Ask about evidence requirements: will an auditor ask who approved this and why? Ask about automation coupling: does the output feed a system that executes without a second look? If reversibility is low, blast radius is high, evidence demand is high, or automation coupling is high, default to approval.
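The four questions can be written down as a small decision rule. The sketch below is illustrative only: the names `OutputProfile`, `Level`, `approval_reasons`, and `requires_approval` are hypothetical, and the two-level low/high scale is an assumption made to keep the example short. It encodes the default stated above, where any single high-risk answer is enough to require approval.

```python
from dataclasses import dataclass
from enum import Enum


class Level(Enum):
    LOW = "low"
    HIGH = "high"


@dataclass(frozen=True)
class OutputProfile:
    """Answers to the four matrix questions for one AI output (hypothetical type)."""
    reversible_in_minutes: bool   # can the effect be undone quickly without harm?
    blast_radius: Level           # does a mistake spread across lines, sites, suppliers?
    evidence_demand: Level        # will an auditor ask who approved this and why?
    automation_coupling: Level    # does the output feed a system that executes unreviewed?


def approval_reasons(profile: OutputProfile) -> list[str]:
    """Return every reason this output should go to a human reviewer."""
    reasons = []
    if not profile.reversible_in_minutes:
        reasons.append("low reversibility")
    if profile.blast_radius is Level.HIGH:
        reasons.append("high blast radius")
    if profile.evidence_demand is Level.HIGH:
        reasons.append("high evidence demand")
    if profile.automation_coupling is Level.HIGH:
        reasons.append("high automation coupling")
    return reasons


def requires_approval(profile: OutputProfile) -> bool:
    """Default to approval if any one of the four questions flags risk."""
    return bool(approval_reasons(profile))


# Example classifications (assumed answers, for illustration only).
bom_change = OutputProfile(False, Level.HIGH, Level.HIGH, Level.LOW)
meeting_summary = OutputProfile(True, Level.LOW, Level.LOW, Level.LOW)

print(requires_approval(bom_change), approval_reasons(bom_change))
print(requires_approval(meeting_summary), approval_reasons(meeting_summary))
```

Returning the list of reasons, not just a yes or no, gives the reviewer and any later auditor a record of why the gate was triggered.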

Examples where approval is usually required

High-consequence cases often include changes to BOMs or sourcing decisions that affect cost or lead time, quality disposition instructions tied to shipments, maintenance actions that can stop a line or compromise safety interlocks, updates to customer-facing certificates or compliance documentation, and scheduling changes that break committed delivery expectations. These are not anti-AI positions. They are proportionate controls.

Examples where approval is often optional

Lower-consequence cases often include drafting internal meeting summaries without operational claims, generating training quizzes from public procedures, brainstorming improvement ideas that still require engineering validation, and summarizing a document the human already owns and will re-read. Even here, discipline matters: teams should still avoid uploading sensitive data into the wrong environment.

Where industrial AI should make approval easy, not invisible

Good industrial AI design separates recommendations from executable actions, shows rationale snippets and source context where possible, supports role-based reviewers, and logs decisions for later reconstruction. The goal is speed with accountability, not speed without trace. Chat-first tools encourage improvisation; workflow-first industrial tools encode where the world changes. Buyers should prefer vendors that understand that difference.
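One way to picture the separation between a recommendation and an executable action is a gate that refuses to run the action until a reviewer with the right role has signed off, and that logs every outcome. This is a minimal sketch under stated assumptions, not a description of any vendor's implementation: `Recommendation`, `Reviewer`, `DecisionLog`, `execute_if_allowed`, and the role names are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable, Optional


@dataclass(frozen=True)
class Recommendation:
    summary: str
    rationale: str                 # short explanation shown to the reviewer
    source: str                    # where the supporting context came from
    required_role: Optional[str]   # None means no approval is needed


@dataclass(frozen=True)
class Reviewer:
    name: str
    role: str


@dataclass
class DecisionLog:
    entries: list = field(default_factory=list)

    def record(self, rec: Recommendation, reviewer: Optional[Reviewer], decision: str) -> None:
        # Keep enough detail to reconstruct who decided what, and when.
        self.entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "summary": rec.summary,
            "rationale": rec.rationale,
            "source": rec.source,
            "reviewer": reviewer.name if reviewer else None,
            "decision": decision,
        })


def execute_if_allowed(rec: Recommendation, action: Callable[[], None],
                       log: DecisionLog, reviewer: Optional[Reviewer] = None) -> bool:
    """Run the action only if no approval is needed or the right role approved."""
    if rec.required_role is None:
        log.record(rec, reviewer, "auto")
    elif reviewer is not None and reviewer.role == rec.required_role:
        log.record(rec, reviewer, "approved")
    else:
        log.record(rec, reviewer, "blocked")
        return False
    action()
    return True
```

In this sketch the recommendation is plain data and the action is a separate callable, so nothing changes in the plant until the gate returns `True`. Blocked attempts are logged as well, which keeps the trail complete when someone asks later what was proposed and why it did not run.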

Approval intensity should track impact, not headlines. Vector aligns with that discipline: industrial reasoning inside the DBR77 ecosystem with clear deployment boundaries, no training on client data, and room to pair high-stakes decisions with human judgment where your matrix says it belongs—rather than treating every output as autonomous.

Approval is not about distrusting the model. It is about matching control intensity to impact. Manufacturers that publish a clear matrix reduce shadow IT and reduce incidents at the same time.

Plant checkpoint

Treat “When AI Outputs Need Human Approval and When They Do Not” as a decision tool, not background reading. Before the next steering meeting, ask for one artifact that proves your posture: an architecture diagram, a training-policy excerpt, a log sample, a signed workflow classification, or a promotion record. If the room can only tell stories, the program is still a pilot, whatever it is called. Manufacturing AI matures when evidence becomes routine: the same discipline you already expect before a line release, a supplier change, or a major IT cutover. That is the shift from excitement to infrastructure, and it is what keeps programs coherent across audits, turnover, and multi-site expansion.

If leadership wants one crisp decision habit, make it this: name what must be true before usage expands, then review whether it is true on a fixed cadence. That is how governance stops being a narrative comfort and becomes an operating metric your plants can execute.

DBR77 Vector supports governed industrial workflows with clear deployment boundaries and reasoning oriented to factory decisions rather than unconstrained chat autonomy.