Core problem: teams default to public AI for speed, then discover data exposure, weak governance, and deployment mismatch when work touches real factory knowledge

Main promise: manufacturers can decide early whether convenience is appropriate or whether private industrial AI is the responsible default for a given workflow class

Public AI is often the fastest way to get a draft, a summary, or a first-pass answer. That speed is real—and seductive. The question for manufacturing is whether the workflow belongs in that convenience zone at all, or whether “fast” is quietly trading away boundaries your organization would never accept for a plant system.

Choose private industrial AI when inputs, outputs, or follow-on actions touch sensitive operational data, supplier or customer context, regulated obligations, or anything that would be painful to explain in an audit. Public AI is more defensible when the task is generic, fully disposable, and has no linkage to systems of record. The typical industrial failure mode is not the obvious breach. It is the gray habit: copy-paste from internal tools, half-redacted exports, and “just this once” uploads that become standard practice.

Why the default matters

Manufacturing organizations rarely fail because they lack access to a chat interface. They fail because convenience habits spread faster than classification rules. Once layouts, costs, constraints, or failure narratives live inside public tools, the damage is as much reputational and regulatory as it is technical. Leadership then discovers the program’s real architecture only when someone asks for evidence.

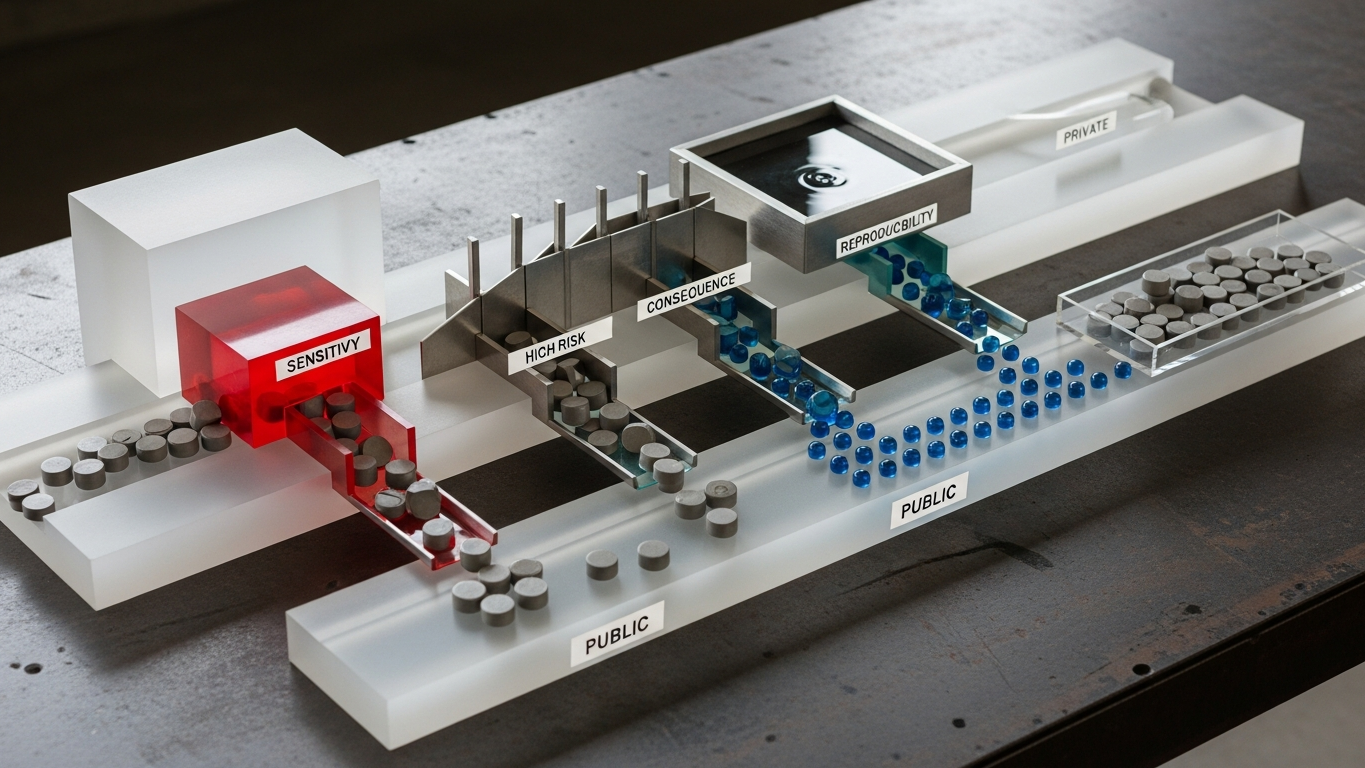

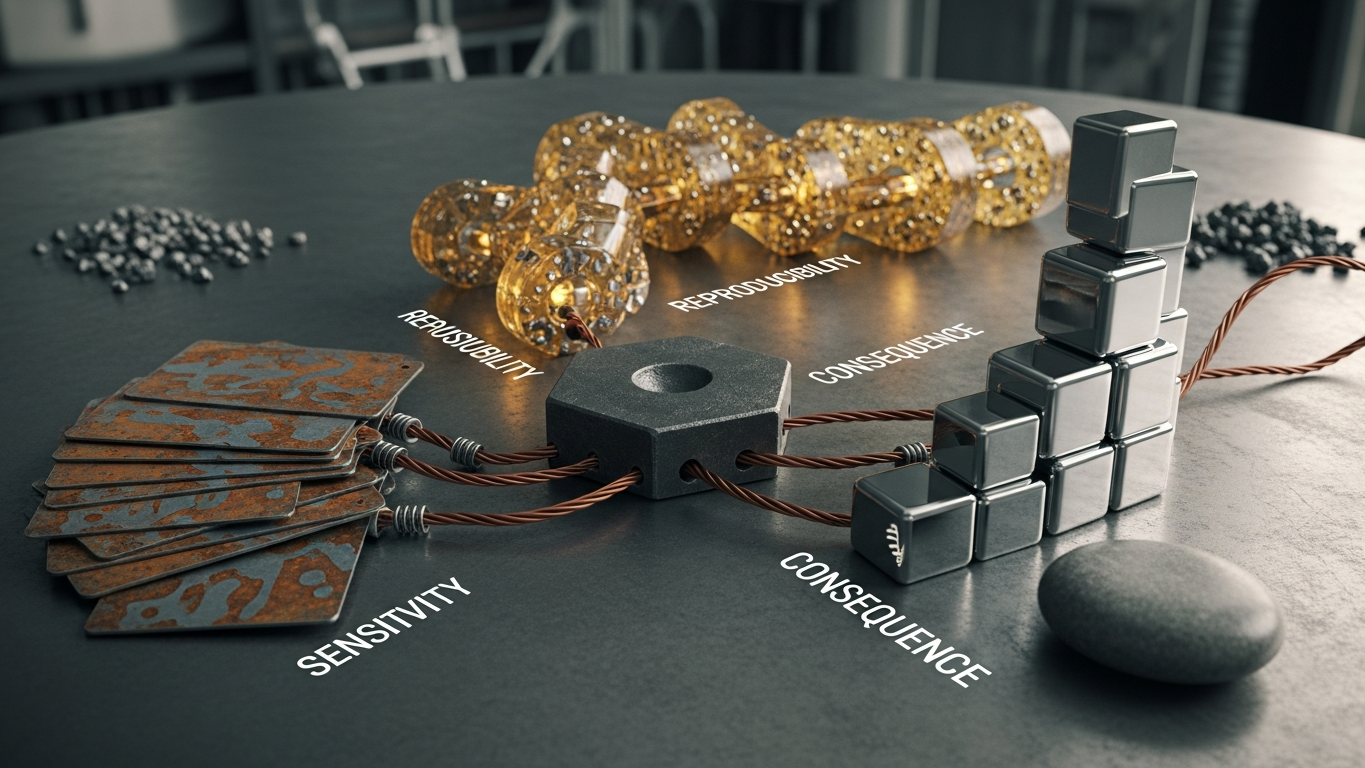

A simple decision filter

Use three lenses. Data sensitivity: would a security team object if this content appeared in the wrong place? Consequence: if the output is wrong, does it change spend, safety, quality, or customer commitments? Reproducibility: do you need a traceable decision record tied to roles and approvals? If any lens reads high, private or controlled deployment should be on the table—not as fear, but as proportionate control.
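
The three lenses can be written down as a small scoring routine. The sketch below is illustrative only: the `Level` scale, the `WorkflowAssessment` fields, and the returned labels are assumptions made for this example, not part of any product or standard. It encodes the one rule stated above: if any lens reads high, private or controlled deployment goes on the table.

```python
from dataclasses import dataclass
from enum import IntEnum


class Level(IntEnum):
    """Hypothetical three-step rating scale for each lens."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class WorkflowAssessment:
    """One workflow rated against the three lenses (field names are assumptions)."""
    name: str
    data_sensitivity: Level  # would a security team object if this content leaked?
    consequence: Level       # would a wrong output change spend, safety, quality, or commitments?
    reproducibility: Level   # is a traceable decision record tied to roles and approvals needed?


def recommend_tool_class(assessment: WorkflowAssessment) -> str:
    """Return a coarse recommendation label for the assessed workflow."""
    lenses = (
        assessment.data_sensitivity,
        assessment.consequence,
        assessment.reproducibility,
    )
    # Rule from the text: any single high lens is enough to escalate.
    if any(level == Level.HIGH for level in lenses):
        return "consider_private_or_controlled"
    # Assumption: with no high lens, public tools may be acceptable.
    return "public_may_be_acceptable"


downtime_analysis = WorkflowAssessment(
    name="root-cause summary for line downtime",
    data_sensitivity=Level.HIGH,
    consequence=Level.MEDIUM,
    reproducibility=Level.MEDIUM,
)
newsletter_draft = WorkflowAssessment(
    name="generic newsletter draft",
    data_sensitivity=Level.LOW,
    consequence=Level.LOW,
    reproducibility=Level.LOW,
)

print(recommend_tool_class(downtime_analysis))
print(recommend_tool_class(newsletter_draft))
```

The ratings still come from people. The value of writing the rule down is that the escalation threshold stops being negotiable case by case.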

When public convenience is still reasonable

Public tools can be acceptable for generic writing that contains no plant-specific facts, public-domain research where sources are verified independently, and training-style exploration that never receives confidential uploads. Even then, operational discipline matters: teams should not blur the boundary through copy-paste from internal systems or screenshots that re-identify the plant.

When private industrial AI is the better default

Private or isolated deployment is usually the right class when work involves process know-how and constraint logic, equipment or supplier specifics, financial or capacity signals, quality and customer-facing commitments, or integration paths toward MES, ERP, QMS, or ticketing systems. This is classification, not catastrophizing: you are choosing a tool class that matches the payload class.
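
Matching tool class to payload class can be made concrete with a tag lookup. This is a minimal sketch under stated assumptions: the tag names are a hypothetical vocabulary derived from the categories in this article, and the conservative "needs_review" fallback for unknown or missing tags is a design choice of the example, not a rule from the text.

```python
# Hypothetical tag vocabulary; the names are illustrative, not a standard.
PRIVATE_TAGS = frozenset({
    "process_know_how",
    "constraint_logic",
    "equipment_specifics",
    "supplier_specifics",
    "financial_signals",
    "capacity_signals",
    "quality_commitments",
    "customer_commitments",
    "system_integration",  # paths toward MES, ERP, QMS, or ticketing systems
})

PUBLIC_OK_TAGS = frozenset({
    "generic_writing",
    "public_research",
    "training_exploration",
})


def classify_tool_class(payload_tags):
    """Return (tool_class, reasons) for the tags attached to a workflow payload."""
    tags = set(payload_tags)
    private_hits = sorted(tags & PRIVATE_TAGS)
    if private_hits:
        # A single sensitive payload class is enough to choose the private tool class.
        return "private", private_hits
    if tags and tags <= PUBLIC_OK_TAGS:
        return "public_ok", []
    # Assumption: unknown or missing tags go to a human reviewer rather than a default.
    return "needs_review", sorted(tags - PUBLIC_OK_TAGS)


print(classify_tool_class(["generic_writing", "supplier_specifics"]))
print(classify_tool_class(["generic_writing"]))
print(classify_tool_class(["marketing_copy"]))
```

A mixed payload resolves to the stricter class, which mirrors the copy-paste risk: one pasted supplier detail changes the class of an otherwise generic draft.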

How deployment boundaries change the trade-off

Private industrial AI should make the following explicit: where the model runs, how data moves, whether client data can train the vendor model, and how access is logged and reviewed. Public tools rarely offer that depth at the level manufacturing needs, because they were not designed to be a system of record for operational truth.
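
Those four points can be treated as a record that a deployment either fills in or leaves open. The sketch below is a hypothetical structure for a procurement or security review; the class name, field names, and example values are assumptions for illustration, not a vendor schema.

```python
from dataclasses import dataclass, fields
from typing import List, Optional


@dataclass
class DeploymentBoundary:
    """Answers a deployment should make explicit; None means 'not yet answered'."""
    model_location: Optional[str] = None                     # where the model runs
    data_flow: Optional[str] = None                          # how data moves
    client_data_trains_vendor_model: Optional[bool] = None   # training-policy answer
    access_logging: Optional[str] = None                     # how access is logged
    access_review: Optional[str] = None                      # how logs are reviewed


def open_questions(boundary: DeploymentBoundary) -> List[str]:
    """List the fields that are still unanswered, in declaration order."""
    return [f.name for f in fields(boundary) if getattr(boundary, f.name) is None]


# Example values are invented for illustration.
candidate = DeploymentBoundary(
    model_location="on-premise, plant data center",
    client_data_trains_vendor_model=False,
)

print(open_questions(candidate))
```

An empty list does not prove the answers are good. It only shows that each question has an owner-supplied answer that can be checked against evidence.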

When sensitivity, consequence, and audit expectations outweigh public-tool convenience, the comparison set shifts to industrial intelligence with explicit deployment boundaries, manufacturing-oriented reasoning, and no use of your operational data to train a shared model. Vector occupies that layer of the DBR77 ecosystem on purpose: it is proprietary industrial AI trained on factory transformation knowledge, available on-premise, via private API, or in isolated deployment patterns, so procurement can evaluate control before habit sets in.

Private AI is not an aesthetic choice. It is the right default when manufacturing workflows carry real sensitivity, consequence, and audit expectations. Public AI can remain useful—but only inside a boundary that procurement, security, and operations agree is safe.

Plant checkpoint

Treat “When a Manufacturer Should Choose Private AI Over Public AI Convenience” as a decision tool, not background reading. Before the next steering meeting, ask for one artifact that proves your posture: an architecture diagram, a training-policy excerpt, a log sample, a signed workflow classification, or a promotion record. If the room can only tell stories, the program is still a pilot, whatever it is called. Manufacturing AI matures when evidence becomes routine: the same discipline you already expect before a line release, a supplier change, or a major IT cutover. That is the shift from excitement to infrastructure, and it is what keeps programs coherent across audits, turnover, and multi-site expansion.

If leadership wants one crisp decision habit, make it this: name what must be true before usage expands, then review whether it is true on a fixed cadence. That is how governance stops being a narrative comfort and becomes an operating metric your plants can execute.
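
That habit can be tracked as a short list of named preconditions with review dates. The sketch below is a minimal illustration: the `Precondition` fields, the 90-day cadence, and the example statements and dates are all assumptions chosen for the example.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Tuple


@dataclass
class Precondition:
    """Something that must be true before AI usage expands (hypothetical structure)."""
    statement: str
    is_true: bool
    last_reviewed: date


def expansion_blockers(
    preconditions: List[Precondition],
    today: date,
    cadence_days: int = 90,  # assumed review cadence; set to your own governance cycle
) -> List[Tuple[str, str]]:
    """Return (statement, reason) pairs that should block expansion."""
    blockers = []
    for item in preconditions:
        if not item.is_true:
            blockers.append((item.statement, "not yet true"))
        elif today - item.last_reviewed > timedelta(days=cadence_days):
            # Confirmed once, but not re-checked within the agreed cadence.
            blockers.append((item.statement, "review overdue"))
    return blockers


# Example statements and dates are invented for illustration.
checks = [
    Precondition("Workflow classification signed", True, date(2024, 5, 20)),
    Precondition("Access logs reviewed by security", True, date(2024, 1, 10)),
    Precondition("Training-policy excerpt on file", False, date(2024, 5, 20)),
]

for statement, reason in expansion_blockers(checks, today=date(2024, 6, 1)):
    print(f"{statement}: {reason}")
```

Passing `today` in explicitly keeps the check reproducible, which matters when the result itself may be shown as evidence.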

DBR77 Vector offers private, on-premise, and isolated deployment paths so sensitive manufacturing workflows do not depend on public-model convenience alone. Review security or explore products using Vector.