Core problem: many AI products look promising in demos but are not ready for industrial deployment, where control, governance, and consequence matter.

Main promise: manufacturers should define deployment-ready AI through operational fit, not only model capability.

A model can look impressive and still be unready for industry. That gap matters because manufacturing does not reward demos. It rewards systems that can run under pressure: when data is incomplete, when stakes are high, and when someone will ask how a decision was supported.

In manufacturing, deployment-ready AI means more than “the demo worked.” It means the system can operate inside real industrial constraints—where the failure mode is not embarrassment, but scrap, downtime, customer exposure, or a quality investigation that needs a coherent record.

Why demos are not enough

Demos usually show speed, interface quality, fluent output, and narrow use-case success. Those things matter. They do not prove the system is ready for serious industrial deployment. Readiness is also about what happens when the tool touches real payloads, real roles, and real systems—especially when outputs approach execution.

Deployment-ready means the operating model is ready

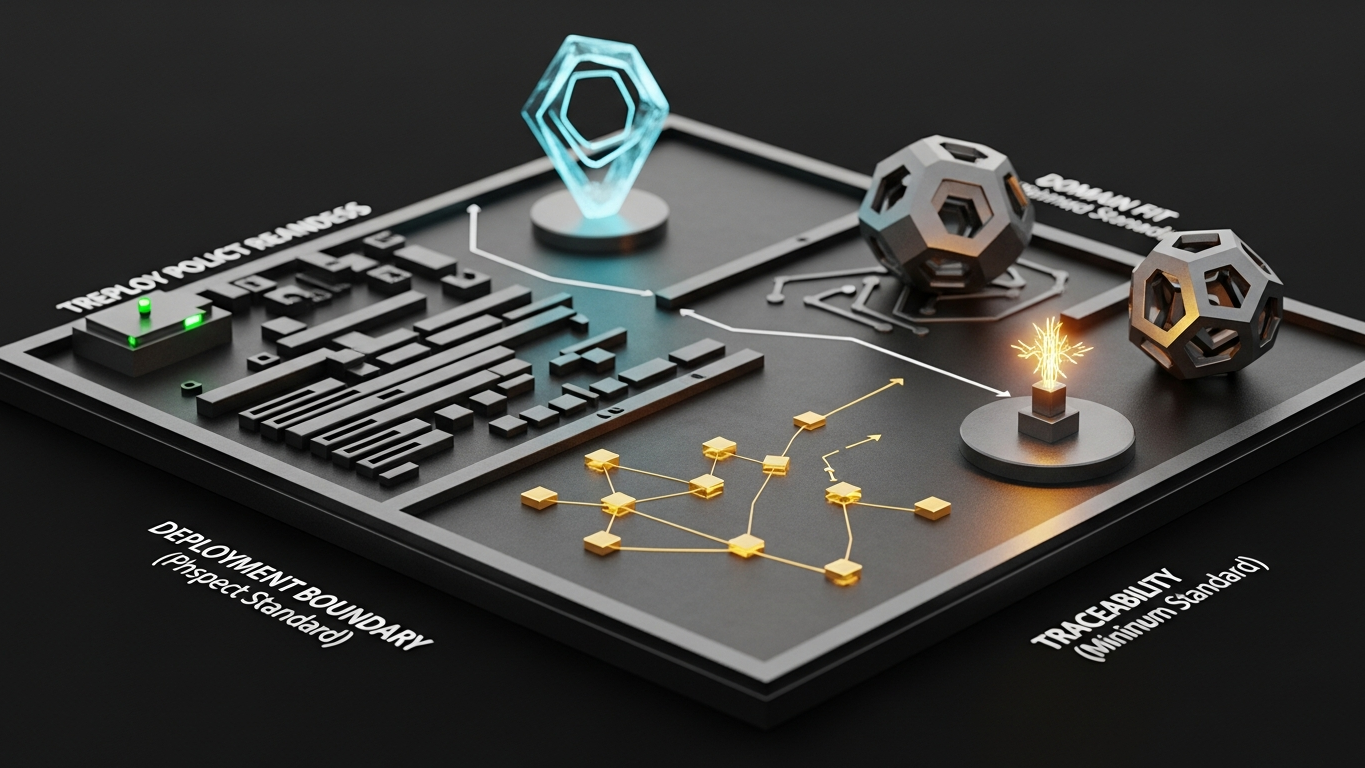

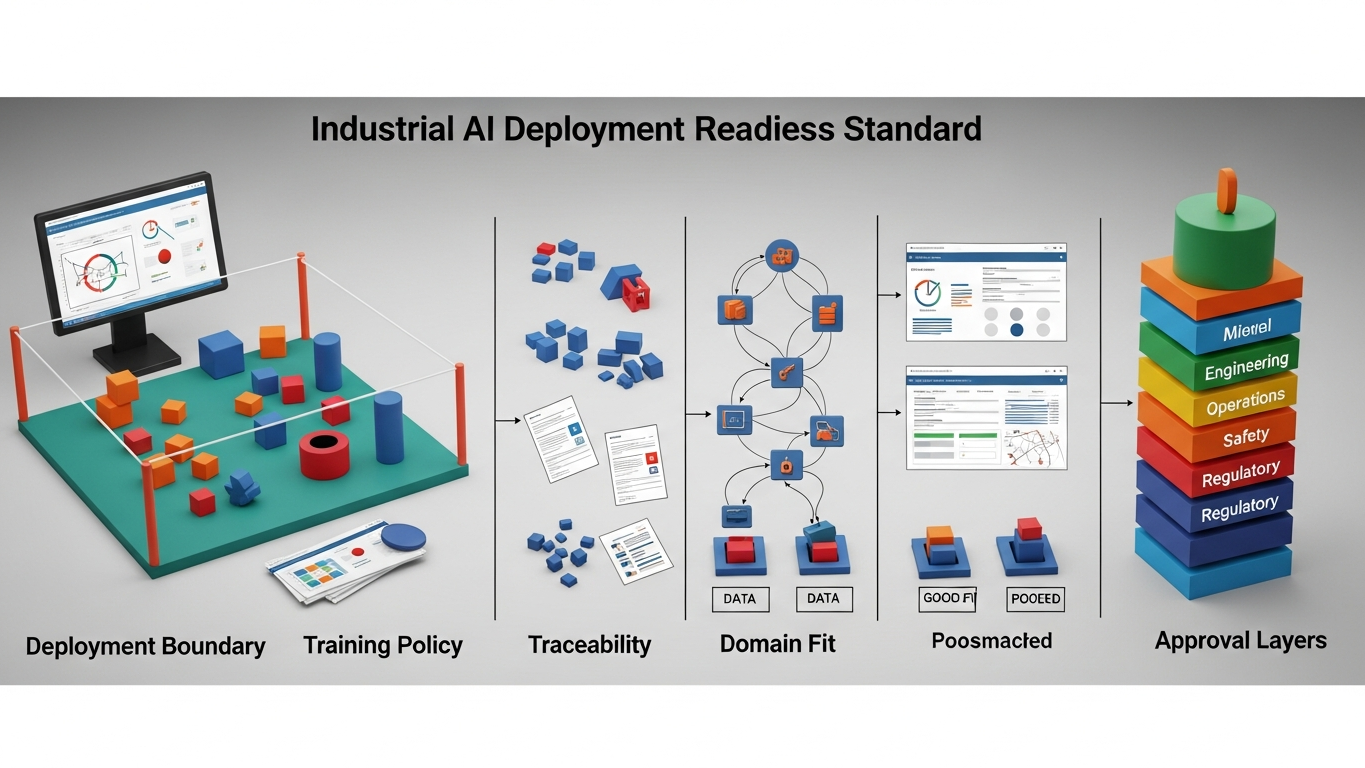

In industry, deployment readiness should include the right deployment boundary, clear training policy, strong access control, traceability, and human approval where needed. Without those layers, the model may be useful in theory and weak in practice—because the organization cannot trust it, sign it off, or explain it later.
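As a rough illustration of how "human approval where needed" and "traceability" can work together, the sketch below holds high-impact recommendations until a named approver signs off and records every decision in a trace log. All names here (`Recommendation`, `ApprovalGate`, `release`, the `held_for_approval` label) are hypothetical and chosen for this example; they do not describe any specific product's API.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class Recommendation:
    summary: str        # what the AI system proposes
    high_impact: bool   # assumed to be classified upstream by the workflow owner


@dataclass
class TraceEntry:
    timestamp: str
    summary: str
    decision: str            # "released" or "held_for_approval"
    approver: Optional[str]  # who signed off, if anyone


@dataclass
class ApprovalGate:
    trace: List[TraceEntry] = field(default_factory=list)

    def release(self, rec: Recommendation, approver: Optional[str] = None) -> bool:
        """Return True if the recommendation may proceed; always log the decision."""
        # High-impact output without a named approver is held, not executed.
        if rec.high_impact and approver is None:
            decision = "held_for_approval"
        else:
            decision = "released"
        self.trace.append(
            TraceEntry(
                timestamp=datetime.now(timezone.utc).isoformat(),
                summary=rec.summary,
                decision=decision,
                approver=approver,
            )
        )
        return decision == "released"


gate = ApprovalGate()
change = Recommendation("Adjust oven setpoint on line 3", high_impact=True)
held = gate.release(change)                          # no approver: held
released = gate.release(change, approver="qa_lead")  # approved: released
```

The design choice worth noting is that the held attempt is logged as well as the approved one, so a later review can see both what was proposed and who allowed it to proceed.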

Industrial readiness includes consequence fit

A model is not deployment-ready just because it can answer well. It also has to fit workflow consequence, data sensitivity, governance requirements, and operational trust levels. That is what separates industrial deployment from casual AI adoption: the surrounding control plane, not the chat window.

Why readiness is often overstated

Vendors often present technical possibility as deployment readiness. Those are different things. A system may be technically deployable while still being weak on approval design, security clarity, auditability, or domain fit. That is not enough for manufacturing—not because the technology is “bad,” but because the program will fracture under the first serious review.

What buyers should verify before calling AI deployment-ready

Manufacturers should confirm that the deployment model fits the control requirement, that client data is not used to train the model, that outputs can be reviewed and traced, that the system reflects industrial reasoning, and that high-impact actions keep the right approval layers. That is the minimum viable readiness standard: boring on paper, decisive in practice.
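One way to make the five checks above reviewable is to record them as an explicit checklist and refuse to call a system deployment-ready while any item is unmet. The following is a minimal sketch under that assumption; the class and field names are illustrative, not a standard or a vendor schema.

```python
from dataclasses import dataclass, fields
from typing import List


@dataclass(frozen=True)
class ReadinessChecklist:
    # Each field mirrors one verification item from the paragraph above.
    deployment_fits_control_requirement: bool
    client_data_excluded_from_training: bool
    outputs_reviewable_and_traceable: bool
    reflects_industrial_reasoning: bool
    high_impact_actions_require_approval: bool


def unmet_criteria(checklist: ReadinessChecklist) -> List[str]:
    """List the names of every check that is not yet satisfied."""
    return [f.name for f in fields(checklist) if not getattr(checklist, f.name)]


def is_deployment_ready(checklist: ReadinessChecklist) -> bool:
    """Ready only when every check passes; there is no partial credit."""
    return not unmet_criteria(checklist)


candidate = ReadinessChecklist(
    deployment_fits_control_requirement=True,
    client_data_excluded_from_training=True,
    outputs_reviewable_and_traceable=False,  # e.g. no log sample available yet
    reflects_industrial_reasoning=True,
    high_impact_actions_require_approval=True,
)
gaps = unmet_criteria(candidate)
ready = is_deployment_ready(candidate)
```

Returning the names of the unmet items, rather than a bare yes or no, gives a steering meeting something concrete to assign and revisit.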

Readiness question: if this output influenced a line or quality decision, could your team reconstruct the story without heroics?

DBR77 Vector is positioned around industrial AI readiness through private deployment options, no training on client data, industrial reasoning, stronger governance expectations, and human approval over critical decisions. That makes readiness about operational reality, not only AI ambition.

Deployment-ready AI for industry is not defined by model fluency alone. It is defined by whether the system can operate inside the control, governance, and consequence levels that manufacturing actually requires.

Plant checkpoint

Treat “What Makes an AI Model ‘Deployment-Ready’ for Industry” as a decision tool, not background reading. Before the next steering meeting, ask for one artifact that proves your posture: an architecture diagram, a training-policy excerpt, a log sample, a signed workflow classification, or a promotion record. If the room can only tell stories, the program is still a pilot, whatever it is called.

Manufacturing AI matures when evidence becomes routine: the same discipline you already expect before a line release, a supplier change, or a major IT cutover. That is the shift from excitement to infrastructure, and it is what keeps programs coherent across audits, turnover, and multi-site expansion. Finally, treat ambiguity as debt: every unanswered question about data paths, training defaults, or approval routing is a cost you will pay later under time pressure, usually during an audit, an incident, or a rushed rollout.

If leadership wants one crisp decision habit, make it this: name what must be true before usage expands, then review whether it is true on a fixed cadence. That is how governance stops being a narrative comfort and becomes an operating metric your plants can execute.

DBR77 Vector helps manufacturers reach real industrial AI readiness through private deployment, stronger governance, and controlled decision support. Review deployment options or review security.