Core problem: teams talk about "private AI" without a shared definition of what the deployment boundary actually protects, and that gap creates false confidence during pilots.

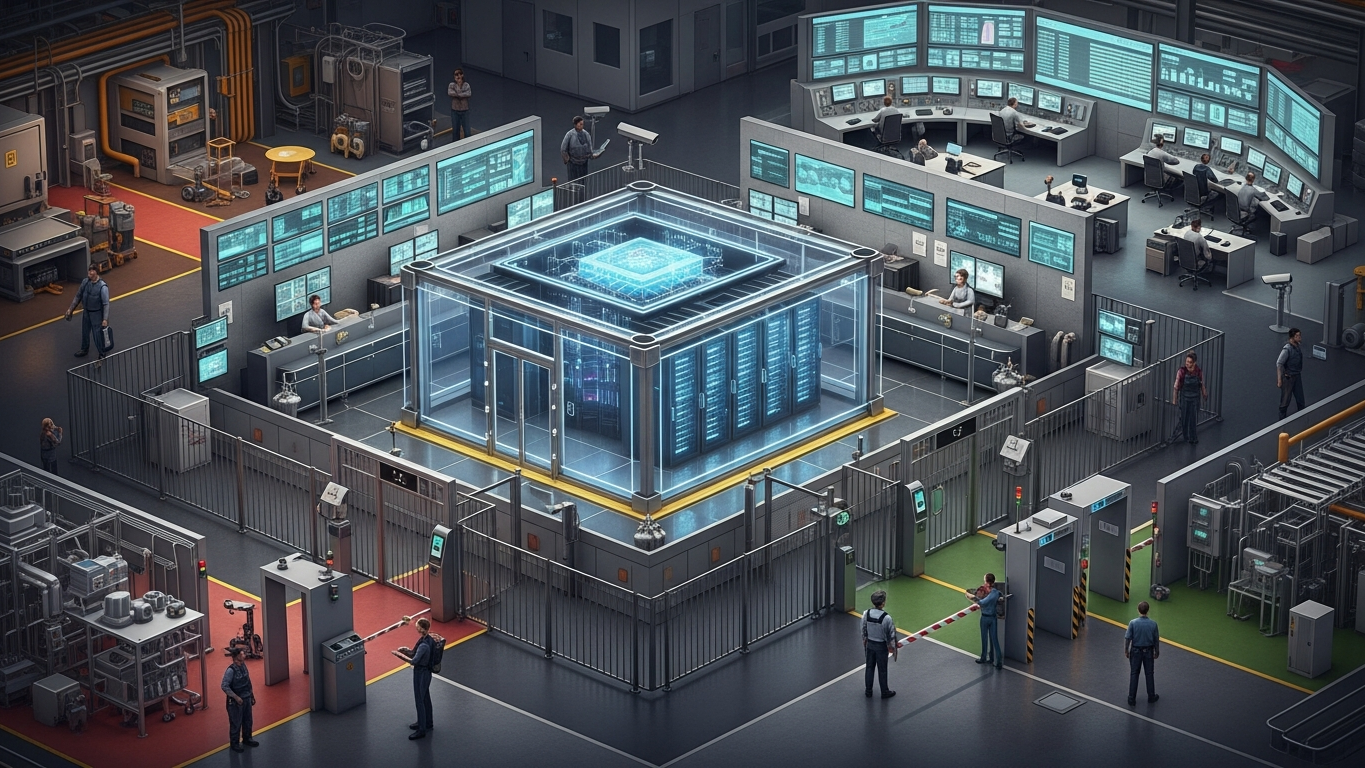

Main promise: manufacturers can define a deployment boundary as a concrete set of controls covering runtime location, data paths, access, egress, retention, and integration rules.

“Private” is not a mood. It is a boundary you can explain to security, operations, and the board when someone asks what is live, where data went, and who could touch it. A manufacturing AI deployment boundary should include where the model runs, which networks it can reach, how data enters and exits, who can access it, what is logged, how long data persists, what training or improvement loops are allowed, and how factory integrations are scoped and monitored. If one of those elements is undefined, the boundary is incomplete—and incomplete boundaries fail under stress.
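The completeness rule above can be made mechanical. The following is a minimal sketch, assuming each boundary element is recorded as a short written statement; the class name, field names, and helper functions are hypothetical and chosen only to mirror the elements listed in the paragraph.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class DeploymentBoundary:
    """One field per boundary element; None means 'not yet defined'.

    Field names are illustrative assumptions, not a standard schema.
    """
    runtime_location: Optional[str] = None
    network_reach: Optional[str] = None
    data_ingress_egress: Optional[str] = None
    access_control: Optional[str] = None
    logging: Optional[str] = None
    retention: Optional[str] = None
    training_policy: Optional[str] = None
    integration_scopes: Optional[str] = None


def undefined_elements(boundary):
    """Return the names of elements that are missing or empty, in field order."""
    return [f.name for f in fields(boundary) if not getattr(boundary, f.name)]


def is_complete(boundary):
    """A boundary counts as complete only when every element is stated."""
    return not undefined_elements(boundary)


# Example: a pilot that has only settled two of the eight elements.
draft = DeploymentBoundary(
    runtime_location="on-premise",
    training_policy="client data does not train the model",
)
print(is_complete(draft))
print(undefined_elements(draft))
```

A check like this does not judge whether a stated control is adequate. It only surfaces the elements nobody has written down yet, which is the gap the paragraph warns about.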

Why boundaries beat brand claims

Buyers hear overlapping words: private cloud, VPC, dedicated instance, enterprise tier. Those labels do not automatically mean the same control posture. A boundary definition forces precision. It also prevents procurement from “solving” risk with vocabulary.

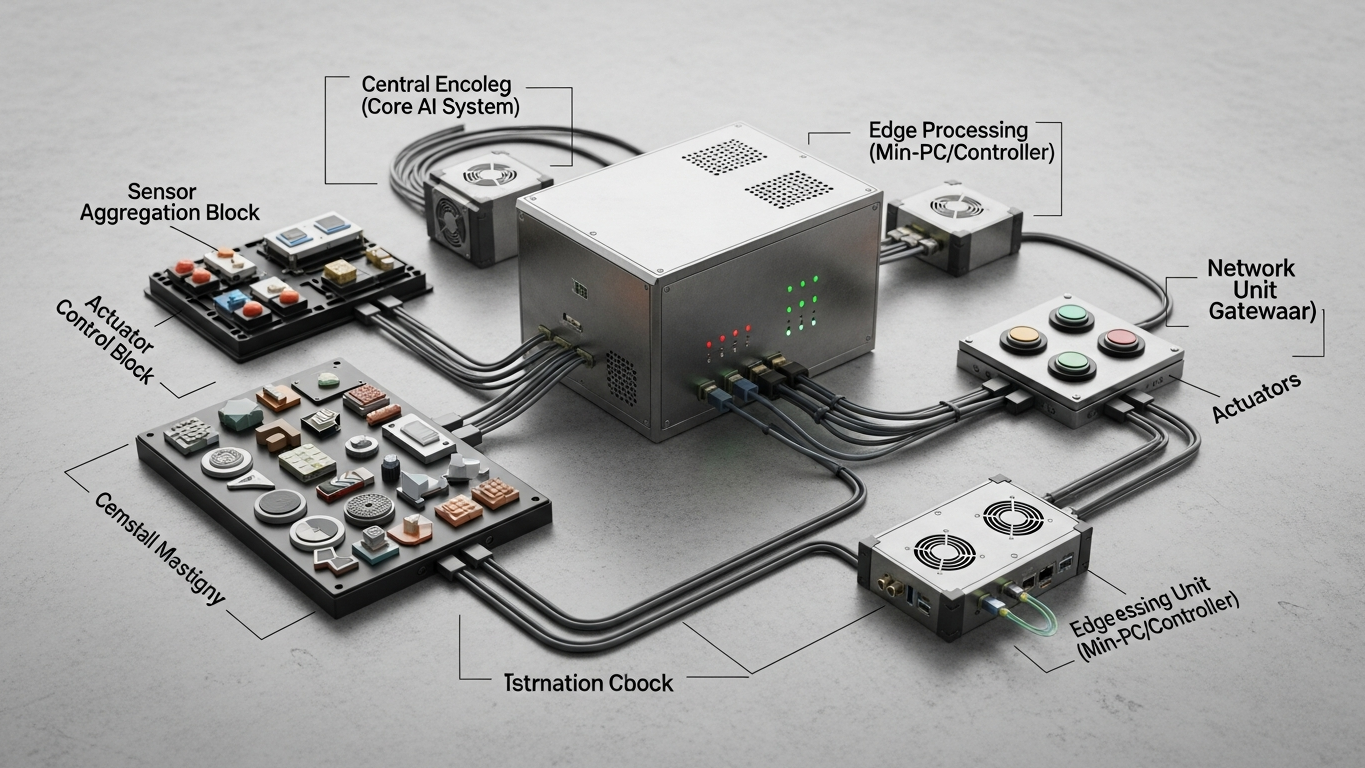

The boundary stack

Runtime location should be explicit: on-premise, customer-controlled private environment, vendor-managed tenant with contractual isolation, or another stated pattern. Network reach should define allowed and denied connectivity, including outbound paths and OT/IT separation expectations. Ingress and egress data paths should document what users and systems can send in, whether attachments or webhooks leave the boundary, and how secrets are handled. Egress deserves the closest review, because it is where many “private” deployments quietly weaken.
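An egress rule is easiest to review when it is written as default-deny with named exceptions. The sketch below illustrates that idea only; the hostname, the allowlist contents, and the function name are hypothetical. In a real deployment the enforcement would sit in a firewall or proxy, not in application code.

```python
from urllib.parse import urlsplit

# Hypothetical named exceptions: (host, port) pairs approved to leave the boundary.
ALLOWED_EGRESS = {("siem.plant.example", 443)}

# Default ports used when a URL does not state one explicitly.
DEFAULT_PORTS = {"https": 443, "http": 80}


def egress_allowed(url, allowlist=ALLOWED_EGRESS):
    """Default-deny: permit a destination only if it is a named exception."""
    parts = urlsplit(url)
    host = parts.hostname  # already lowercased by urlsplit; None if absent
    if host is None:
        return False
    port = parts.port or DEFAULT_PORTS.get(parts.scheme)
    return (host, port) in allowlist


print(egress_allowed("https://siem.plant.example/ingest"))
print(egress_allowed("https://api.vendor.example/v1/chat"))
```

The design choice worth noting is the direction of the default: anything not listed is denied, so an undocumented webhook or attachment upload shows up as a blocked request instead of a silent data path.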

Identity and access control should include SSO and MFA expectations, role separation between admins and operators, and break-glass procedures. Logging, monitoring, and retention should specify what events are logged, who can read logs, retention windows, and export to SIEM. Training and model improvement policy should state whether client prompts or documents can be used for vendor model improvement, whether fine-tuning happens only inside the customer environment, and how evaluation data is segregated from production.

Factory integration scopes should be explicit for APIs connecting to MES, ERP, QMS, or ticketing: least-privilege scopes, change control, and test versus production separation.
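Least-privilege scoping and test versus production separation can be expressed as a simple set comparison. This is a minimal sketch under stated assumptions: the system names, environment names, and scope strings are invented for illustration and do not correspond to any particular MES, ERP, or QMS product.

```python
# Hypothetical scope grants per (system, environment); all names are illustrative.
GRANTED_SCOPES = {
    ("mes", "test"): {"work_orders:read", "work_orders:write"},
    ("mes", "production"): {"work_orders:read"},
    ("qms", "production"): {"nonconformances:read"},
}


def excess_scopes(system, environment, requested):
    """Return requested scopes that exceed the grant, sorted for stable output."""
    # An unknown (system, environment) pair has nothing granted.
    granted = GRANTED_SCOPES.get((system, environment), set())
    return sorted(set(requested) - granted)


request = ["work_orders:read", "work_orders:write"]
print(excess_scopes("mes", "test", request))
print(excess_scopes("mes", "production", request))
print(excess_scopes("erp", "production", ["orders:read"]))
```

The same request passes in test and is flagged in production, which is the separation the paragraph asks for. Any non-empty result is a candidate for change control, not an automatic grant.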

Weak language versus strong language

Weak language sounds like “we take security seriously,” “enterprise-ready,” and “your data is protected.” Strong language sounds like “client data does not train the model, enforced by stated controls,” “no outbound data path except named exceptions,” and “logs retained for a defined period, exportable in a defined format.” Buyers should prefer the second class because each statement can be tested.

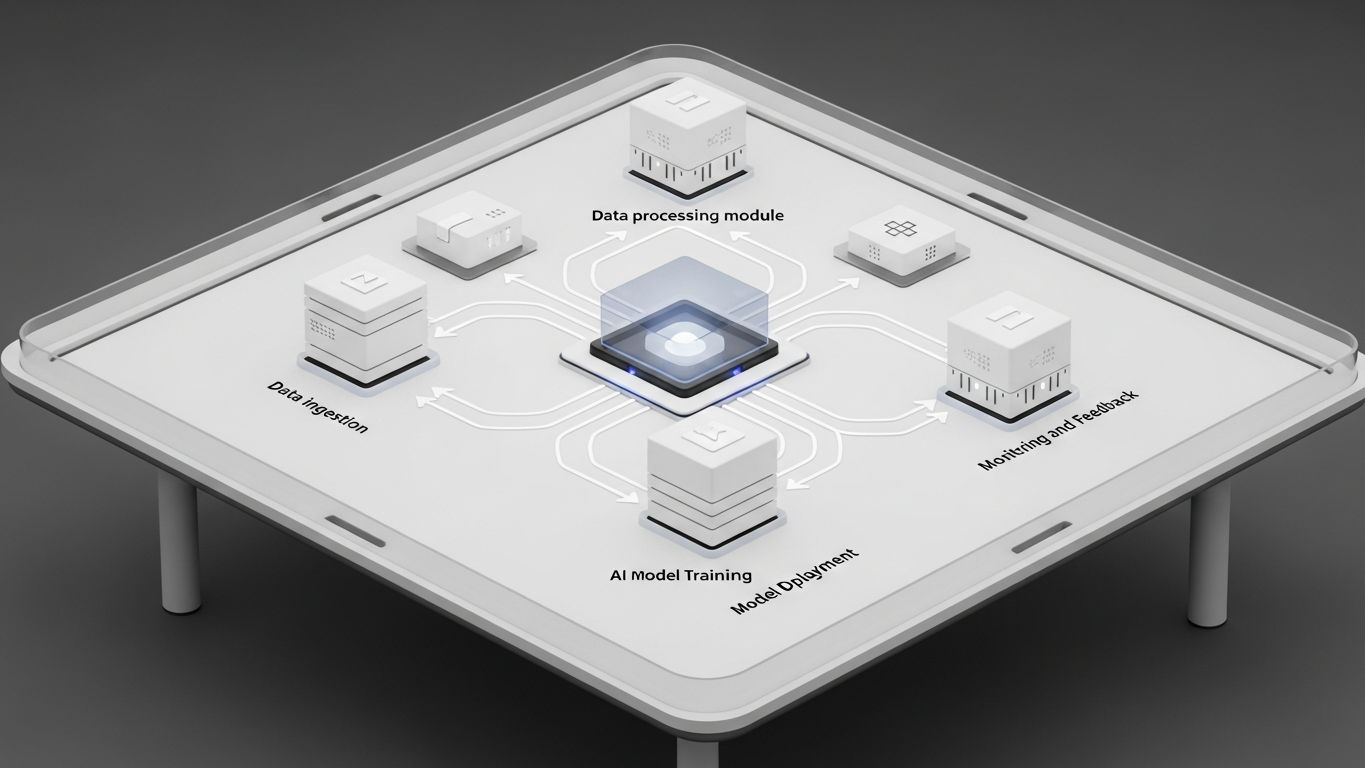

In procurement, turn the boundary stack into a requirements table. Score vendors as supported, supported with conditions, not supported, or roadmap only. Roadmap-only items belong in risk registers, not silent assumptions.
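The scoring scheme above maps naturally onto a small data structure. The following sketch assumes the four score levels named in the paragraph; the requirement names, the output keys, and the `triage` function are hypothetical.

```python
from enum import Enum


class Support(Enum):
    SUPPORTED = "supported"
    CONDITIONAL = "supported with conditions"
    NOT_SUPPORTED = "not supported"
    ROADMAP_ONLY = "roadmap only"


def triage(scores):
    """Group requirement names by score so nothing is silently assumed."""
    grouped = {level: [] for level in Support}
    for requirement, level in scores.items():
        grouped[level].append(requirement)
    return {
        "met": grouped[Support.SUPPORTED],
        "needs_conditions_reviewed": grouped[Support.CONDITIONAL],
        "gaps": grouped[Support.NOT_SUPPORTED],
        # Roadmap-only items are routed to the risk register, never counted as met.
        "risk_register": grouped[Support.ROADMAP_ONLY],
    }


# Illustrative scores for one vendor; the requirement names are made up.
vendor_scores = {
    "runtime location: on-premise": Support.SUPPORTED,
    "egress: named exceptions only": Support.CONDITIONAL,
    "logs exportable to SIEM": Support.ROADMAP_ONLY,
    "client data excluded from training": Support.SUPPORTED,
}
result = triage(vendor_scores)
print(result["risk_register"])
```

Keeping roadmap-only items in their own bucket makes it harder for a promised feature to be read as a delivered control during vendor comparison.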

The boundary stack you define is how you separate real architecture from slideware before money and payloads move. Within the DBR77 ecosystem, Vector is described in those terms: a proprietary industrial AI trained on factory transformation knowledge, offered with on-premise, private API, or isolated deployment choices and an explicit posture that client data does not train the model.

A deployment boundary is the contract between your risk model and your AI architecture. If you cannot state it in operational terms, you are not ready to scale usage beyond experiments.

Plant checkpoint

Treat this boundary definition as a decision tool, not background reading. Before the next steering meeting, ask for one artifact that proves your posture: an architecture diagram, a training-policy excerpt, a log sample, a signed workflow classification, or a promotion record. If the room can only tell stories, you are still running a pilot, whatever the program is called. Manufacturing AI matures when evidence becomes routine: the same discipline you already expect before a line release, a supplier change, or a major IT cutover. That is the shift from excitement to infrastructure, and it is what keeps programs coherent across audits, turnover, and multi-site expansion.

If leadership wants one crisp decision habit, make it this: name what must be true before usage expands, then review whether it is true on a fixed cadence. That is how governance stops being a narrative comfort and becomes an operating metric your plants can execute.

DBR77 Vector is designed around explicit industrial deployment boundaries, including private and on-premise options and a no-client-data-training posture. Explore products using Vector or review security.