Core problem: AI-to-factory integrations are often sold as simple APIs, while the real risk sits in credentials, write authority, data lineage, and failure modes.

Main promise: CTOs can use a focused question set covering identity, scope, side effects, monitoring, rollback, and ownership before any production coupling.

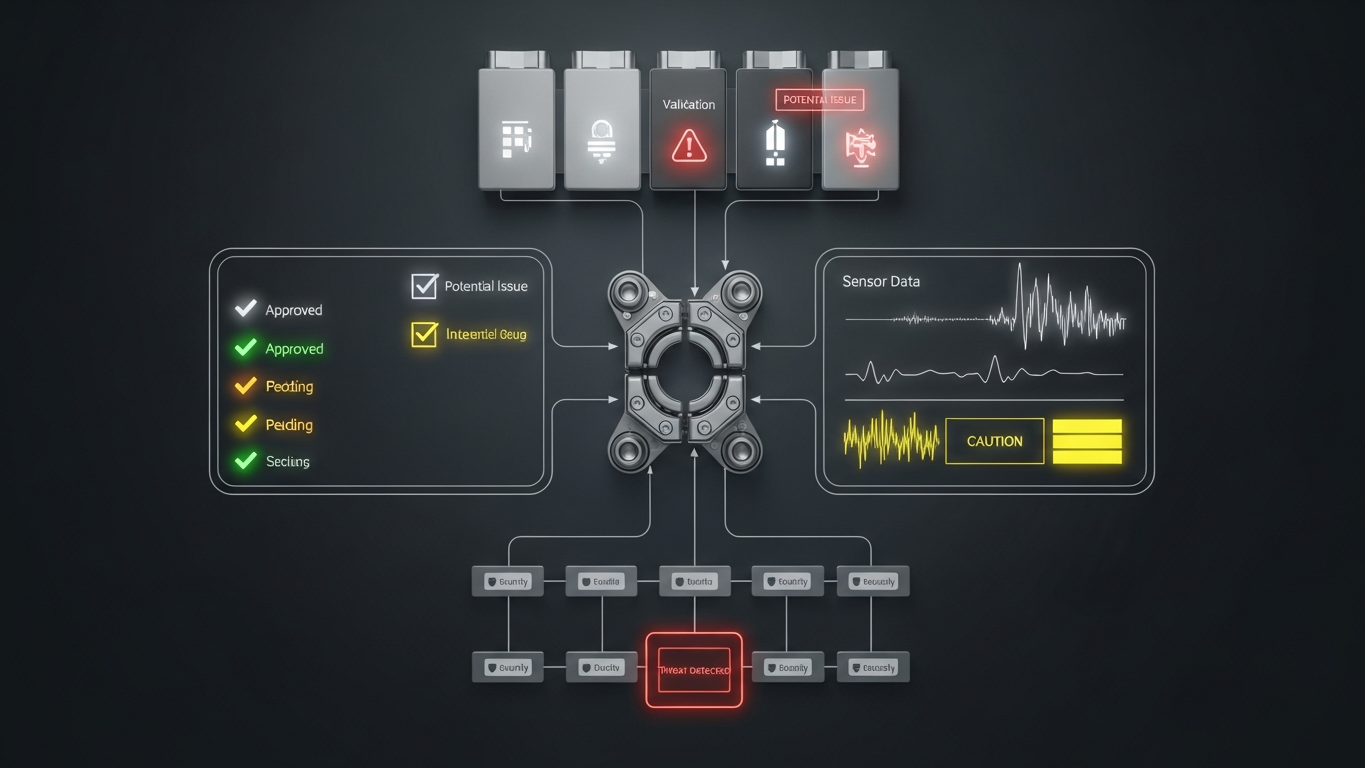

Connecting AI to factory systems is not a feature flip. It expands operational risk, because integration is the moment abstraction ends and state can change. Before coupling AI to MES, ERP, QMS, CMMS, or similar systems, the CTO should confirm identity and least-privilege scopes, read versus write posture, idempotent behavior, failure and timeout handling, audit logs, change control, rollback paths, incident ownership, and whether outputs remain recommendation-only until explicitly approved. If those topics are thin, delay coupling, not because innovation is bad, but because unowned risk is.

Why integration is the real inflection point

Many AI debates stay abstract until a system can change records, schedules, or quality state. Integration is where “assistant” becomes infrastructure. It is also where security and operations teams stop asking about demos and start asking about blast radius—which is exactly the conversation you want while you still have options.

Identity and access

Ask which service accounts exist and who owns credential rotation, how secrets are stored and injected, whether access is scoped to the minimum API surface, and how admin actions are separated from operational calls. Integration identities deserve the same discipline as those of any other plant-adjacent integration, not a shared, catch-all “AI user.”
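A minimal sketch of the identity questions expressed in code, assuming a deny-by-default scope model. The scope strings (`mes:work_orders:read`, `mes:admin`), the `ServiceAccount` type, the `authorize` helper, and the 90-day rotation window are all hypothetical; real MES and ERP platforms define their own permission models. What matters is the shape: every account has a named owner, only explicitly granted scopes pass, admin scopes never ride on the operational identity, and a stale secret blocks calls until it is rotated.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import date, timedelta
from typing import Optional

# Hypothetical scope names; real MES/ERP APIs define their own permission models.
ADMIN_SCOPES = {"mes:admin", "erp:admin"}


@dataclass
class ServiceAccount:
    name: str
    owner: str  # a named person or team, never a shared "AI user"
    scopes: set[str] = field(default_factory=set)
    secret_rotated_on: date = field(default_factory=date.today)


def authorize(
    account: ServiceAccount,
    scope: str,
    *,
    max_secret_age_days: int = 90,  # assumed rotation policy
    today: Optional[date] = None,
) -> bool:
    """Allow an operational call only for an explicitly granted, non-admin scope
    on an account whose secret was rotated recently enough."""
    today = today or date.today()
    if scope in ADMIN_SCOPES:
        # Admin actions use a separate identity and path, not this operational one.
        return False
    if today - account.secret_rotated_on > timedelta(days=max_secret_age_days):
        # Stale credential: deny until the owner rotates it.
        return False
    return scope in account.scopes  # deny by default


reader = ServiceAccount(
    name="vector-mes-reader",
    owner="ot-integration-team",
    scopes={"mes:work_orders:read"},
    secret_rotated_on=date(2024, 1, 10),
)
print(authorize(reader, "mes:work_orders:read", today=date(2024, 2, 1)))   # True
print(authorize(reader, "mes:work_orders:write", today=date(2024, 2, 1)))  # False
print(authorize(reader, "mes:work_orders:read", today=date(2024, 6, 1)))   # False, secret too old
```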

Read versus write

Ask whether the integration can write or only read. If writes exist, which objects can change? Are writes behind explicit human approval? Is there a dry-run or simulation mode? Read-only advisory is easier to defend; write paths demand stronger gates and clearer ownership.
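One way to make the read versus write posture concrete is a write gate that simulates by default and refuses to apply a change without an approval tied to that specific proposal. This is a sketch under stated assumptions: `WRITABLE_OBJECTS`, `Proposal`, `Approval`, and `apply_change` are hypothetical names, and the actual call to the target system is deliberately left out.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional

# Hypothetical allow-list: the only object types this integration may ever change.
WRITABLE_OBJECTS = {"maintenance_request"}


@dataclass
class Proposal:
    proposal_id: str
    object_type: str
    object_id: str
    change: dict


@dataclass
class Approval:
    proposal_id: str
    approver: str  # the human who signed off


def apply_change(
    proposal: Proposal,
    approval: Optional[Approval] = None,
    *,
    dry_run: bool = True,  # simulation is the default; writes are opt-in
) -> dict:
    if proposal.object_type not in WRITABLE_OBJECTS:
        raise PermissionError(f"{proposal.object_type} is read-only for this integration")
    if dry_run:
        # Report what would change without touching the target system.
        return {"status": "simulated", "object_id": proposal.object_id,
                "would_change": proposal.change}
    if approval is None or approval.proposal_id != proposal.proposal_id:
        raise PermissionError("write requires explicit human approval of this proposal")
    # A real integration would call the target system's API here.
    return {"status": "applied", "object_id": proposal.object_id,
            "approved_by": approval.approver}


p = Proposal("P-17", "maintenance_request", "MR-4410", {"priority": "high"})
print(apply_change(p)["status"])                                                 # simulated
print(apply_change(p, Approval("P-17", "shift.lead"), dry_run=False)["status"])  # applied
```

Defaulting `dry_run` to `True` means a misconfigured caller simulates rather than writes, which is the safer way to fail.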

Side effects and blast radius

Ask what happens if the model recommends the wrong action, whether partial failure can leave systems inconsistent, and whether transactions are bounded and retry-safe. The goal is not perfect models. The goal is controlled failure modes.
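Retry safety usually comes down to idempotency: if a call times out after the write landed, a retry must not apply it a second time. This sketch assumes the target system (or a gateway in front of it) deduplicates on a caller-supplied idempotency key; `FakeTargetSystem`, `TransientError`, and `submit_with_retry` are hypothetical stand-ins, and the three-attempt limit and backoff delays are illustrative values.

```python
import time


class TransientError(Exception):
    """A timeout or similar failure where retrying is assumed to be safe."""


class FakeTargetSystem:
    """Stand-in for a target system that deduplicates by idempotency key.

    Assumption: the real MES/CMMS, or a gateway in front of it, supports this.
    """

    def __init__(self, lost_responses: int = 0):
        self.applied = {}      # idempotency key -> stored result
        self.apply_count = 0   # how many writes actually took effect
        self._lost = lost_responses

    def submit(self, key: str, payload: dict) -> dict:
        if key not in self.applied:
            self.applied[key] = dict(payload)
            self.apply_count += 1
        if self._lost > 0:
            # The write landed, but the caller never sees the response.
            self._lost -= 1
            raise TransientError("response lost after write")
        return self.applied[key]


def submit_with_retry(system, key, payload, max_attempts=3, base_delay=0.01):
    """Bounded retries with exponential backoff; the same key is reused every time."""
    for attempt in range(1, max_attempts + 1):
        try:
            return system.submit(key, payload)
        except TransientError:
            if attempt == max_attempts:
                raise  # surface the failure instead of retrying forever
            time.sleep(base_delay * 2 ** (attempt - 1))


target = FakeTargetSystem(lost_responses=2)
result = submit_with_retry(target, "rec-7f3a", {"action": "reschedule", "order": "WO-1001"})
print(target.apply_count)  # 1: three attempts, but the write took effect once
```

Bounding the attempts matters as much as the key: after the last attempt the error reaches an owner instead of looping silently.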

Observability

Ask what logs exist for each API call, whether logs can correlate AI events to manufacturing records, and what metrics indicate drift or rising error rates. If you cannot see integration health, you cannot operate it.
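A sketch of what correlating AI events to manufacturing records can mean in practice: one structured JSON log line per call, carrying a correlation ID and the record it touched, plus a rolling error-rate check. The field names, the `audit_event` and `ErrorRateMonitor` helpers, and the 5% default threshold are assumptions for illustration, not a prescribed schema.

```python
import json
import logging
import uuid
from collections import deque
from datetime import datetime, timezone

logging.basicConfig(level=logging.INFO, format="%(message)s")
logger = logging.getLogger("ai_integration")


def audit_event(action, target_system, record_id, outcome, correlation_id=None):
    """Emit one structured log line per API call, keyed to a manufacturing record."""
    event = {
        "ts": datetime.now(timezone.utc).isoformat(),
        # Reuse the caller's correlation ID so one AI recommendation can be traced
        # across every call it triggers; mint a new one only if none was supplied.
        "correlation_id": correlation_id or str(uuid.uuid4()),
        "action": action,
        "target_system": target_system,
        "record_id": record_id,  # e.g. the work order or batch the call touched
        "outcome": outcome,
    }
    logger.info(json.dumps(event))
    return event


class ErrorRateMonitor:
    """Rolling error rate over the last `window` calls; the threshold is an assumption."""

    def __init__(self, window=100, threshold=0.05):
        self.outcomes = deque(maxlen=window)
        self.threshold = threshold

    def record(self, ok: bool):
        self.outcomes.append(ok)

    def error_rate(self) -> float:
        if not self.outcomes:
            return 0.0
        return self.outcomes.count(False) / len(self.outcomes)

    def should_alert(self) -> bool:
        return self.error_rate() > self.threshold


monitor = ErrorRateMonitor(window=10, threshold=0.1)
for ok in [True] * 8 + [False] * 2:
    monitor.record(ok)
audit_event("read_work_order", "MES", "WO-1001", "ok", correlation_id="rec-7f3a")
print(monitor.error_rate(), monitor.should_alert())  # 0.2 True
```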

Change control and environments

Ask how you promote from pilot to production, how model or prompt updates are versioned, and whether configuration can roll back independently of plant releases. AI systems change often; factories require predictable promotion.
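To illustrate predictable promotion and independent rollback, here is a hypothetical registry that records AI configuration (a model identifier plus a prompt template) per environment, kept separate from plant release artifacts. `AIConfig` and `ConfigRegistry` are invented for this sketch; the rules it encodes are that only a version that ran in pilot can be promoted, and that rolling back production leaves pilot untouched and is itself recorded in the audit trail.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AIConfig:
    version: str
    model: str            # hypothetical model identifier
    prompt_template: str


class ConfigRegistry:
    """Per-environment deployment history with an audit trail of every change."""

    def __init__(self):
        self.deployed = {"pilot": [], "production": []}
        self.audit = []  # (environment, action, version) records

    def deploy_pilot(self, config: AIConfig):
        self.deployed["pilot"].append(config)
        self.audit.append(("pilot", "deploy", config.version))

    def promote(self, version: str):
        # Only a version that has already run in pilot may reach production.
        candidates = [c for c in self.deployed["pilot"] if c.version == version]
        if not candidates:
            raise ValueError(f"{version} never ran in pilot")
        self.deployed["production"].append(candidates[-1])
        self.audit.append(("production", "promote", version))

    def active(self, env: str):
        stack = self.deployed[env]
        return stack[-1] if stack else None

    def rollback(self, env: str) -> AIConfig:
        # Revert one environment; the other environment's history is left as-is.
        stack = self.deployed[env]
        if len(stack) < 2:
            raise RuntimeError(f"no earlier {env} version to roll back to")
        removed = stack.pop()
        self.audit.append((env, "rollback", removed.version))
        return stack[-1]


registry = ConfigRegistry()
registry.deploy_pilot(AIConfig("v1", "model-a", "triage-prompt-1"))
registry.promote("v1")
registry.deploy_pilot(AIConfig("v2", "model-a", "triage-prompt-2"))
registry.promote("v2")
print(registry.rollback("production").version)  # v1
print(registry.active("pilot").version)         # v2, pilot unaffected
```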

Ownership and incident response

Ask who is paged when integrations fail, what the vendor responsibility boundary is, and what recovery time is tolerable for your line class. Unowned integrations become everyone’s problem at the worst moment.
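Ownership answers are easier to audit when they live in a structured record rather than in meeting notes. A minimal sketch, assuming a hypothetical `IntegrationOwnership` record with illustrative field names, that flags any integration lacking a named owner, a pager rotation, a written vendor boundary, or a recovery target:

```python
from dataclasses import dataclass


@dataclass
class IntegrationOwnership:
    integration: str
    owner: str                 # accountable person or team
    pager_rotation: str        # who gets paged when the integration fails
    vendor_boundary: str       # what the vendor is responsible for, in writing
    max_recovery_minutes: int  # tolerable recovery time for this line class


def ownership_gaps(entries):
    """Return integrations missing an owner, pager, vendor boundary, or recovery target."""
    gaps = []
    for e in entries:
        text_fields = (e.owner, e.pager_rotation, e.vendor_boundary)
        if any(not value.strip() for value in text_fields) or e.max_recovery_minutes <= 0:
            gaps.append(e.integration)
    return gaps


registry = [
    IntegrationOwnership("vector-mes-read", "ot-integration-team", "ot-oncall",
                         "vendor: model service; plant: MES connector", 60),
    IntegrationOwnership("vector-cmms-write", "", "ot-oncall", "", 30),
]
print(ownership_gaps(registry))  # ['vector-cmms-write']
```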

Whichever posture you choose, read-only advisory or closed-loop assistance behind stronger gates, name it explicitly and prevent silent drift from one to the other.
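Silent drift can be checked mechanically by comparing the declared mode against what the integration actually does. This sketch assumes a REST-style API in which POST, PUT, PATCH, and DELETE change state, and that method and path pairs are available from gateway or audit logs; `Mode` and `detect_mode_drift` are hypothetical names.

```python
from enum import Enum


class Mode(Enum):
    ADVISORY = "read-only advisory"
    CLOSED_LOOP = "closed-loop assistance"


# Assumes a REST-style API where these HTTP methods change state.
WRITE_METHODS = {"POST", "PUT", "PATCH", "DELETE"}


def detect_mode_drift(declared: Mode, observed_calls):
    """Return write calls observed while the integration is declared advisory-only.

    `observed_calls` is assumed to be (http_method, path) pairs from gateway or audit logs.
    """
    if declared is Mode.CLOSED_LOOP:
        return []  # writes are expected here; the approval gate governs them instead
    return [(method, path) for method, path in observed_calls
            if method.upper() in WRITE_METHODS]


calls = [("GET", "/work-orders/1001"), ("patch", "/work-orders/1001"), ("GET", "/schedules/line-3")]
print(detect_mode_drift(Mode.ADVISORY, calls))  # [('patch', '/work-orders/1001')]
```

A non-empty result for an advisory integration means it has crossed into write territory without the gates that mode requires.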

Question sets still need named owners and written answers; the AI layer does not replace integration discipline. Vector is positioned as industrial AI within the DBR77 ecosystem, with three properties relevant here: deployment options that can follow the same segmentation, identity, and logging standards as other factory-adjacent systems; manufacturing-oriented reasoning instead of generic chat; and client data excluded from model training.

The CTO job is to keep innovation from becoming unowned operational risk. Ask integration questions early, in writing, with owners. If the answers are strong, coupling can proceed with confidence.

Plant checkpoint

Treat “What a CTO Should Ask Before Connecting AI to Factory Systems” as a decision tool, not background reading. Before the next steering meeting, ask for one artifact that proves your posture—an architecture diagram, a training-policy excerpt, a log sample, a signed workflow classification, or a promotion record. If the room can only tell stories, you are still in pilot clothing. Manufacturing AI matures when evidence becomes routine: the same discipline you already expect before a line release, a supplier change, or a major IT cutover. That is the shift from excitement to infrastructure—and it is what keeps programs coherent across audits, turnover, and multi-site expansion.

If leadership wants one crisp decision habit, make it this: name what must be true before usage expands, then review whether it is true on a fixed cadence. That is how governance stops being a narrative comfort and becomes an operating metric your plants can execute.
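The decision habit can be sketched as a short list of conditions, each tied to an evidence artifact and a review date. `Condition`, `expansion_blockers`, and the 30-day cadence are assumptions for illustration; under this sketch, usage expands only when the list of blockers is empty.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Condition:
    statement: str       # what must be true before usage expands
    evidence: str        # artifact that proves it: diagram, log sample, promotion record
    verified: bool
    last_reviewed: date


def expansion_blockers(conditions, today, cadence_days=30):
    """List every condition that is unmet, unevidenced, or overdue for review."""
    blockers = []
    for c in conditions:
        if not c.verified:
            blockers.append(f"unmet: {c.statement}")
        elif not c.evidence.strip():
            blockers.append(f"no evidence: {c.statement}")
        elif today - c.last_reviewed > timedelta(days=cadence_days):
            blockers.append(f"review overdue: {c.statement}")
    return blockers


conditions = [
    Condition("writes require human approval", "signed workflow classification", True, date(2024, 5, 20)),
    Condition("client data excluded from training", "training-policy excerpt", True, date(2024, 3, 1)),
    Condition("rollback tested in pilot", "", False, date(2024, 5, 28)),
]
print(expansion_blockers(conditions, today=date(2024, 6, 1)))
# ['review overdue: client data excluded from training', 'unmet: rollback tested in pilot']
```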

DBR77 Vector supports CTO-led evaluations with explicit deployment boundaries, no client-data training, and industrial reasoning suited to governed coupling with factory systems.