Core problem: many buyers compare on-prem and cloud AI through infrastructure preference instead of decision risk, governance, and deployment fit

Main promise: the right deployment model depends on control requirements, not trend pressure

The on-prem versus cloud debate is often dressed up as modern versus cautious. For manufacturing, that is the wrong axis, and it leads to expensive mistakes on both sides. Some teams choose on-prem to signal seriousness without staffing the operating model it requires. Others choose cloud by default because it feels fast, then discover that “fast” does not survive the first serious security review once payloads touch real plant knowledge.

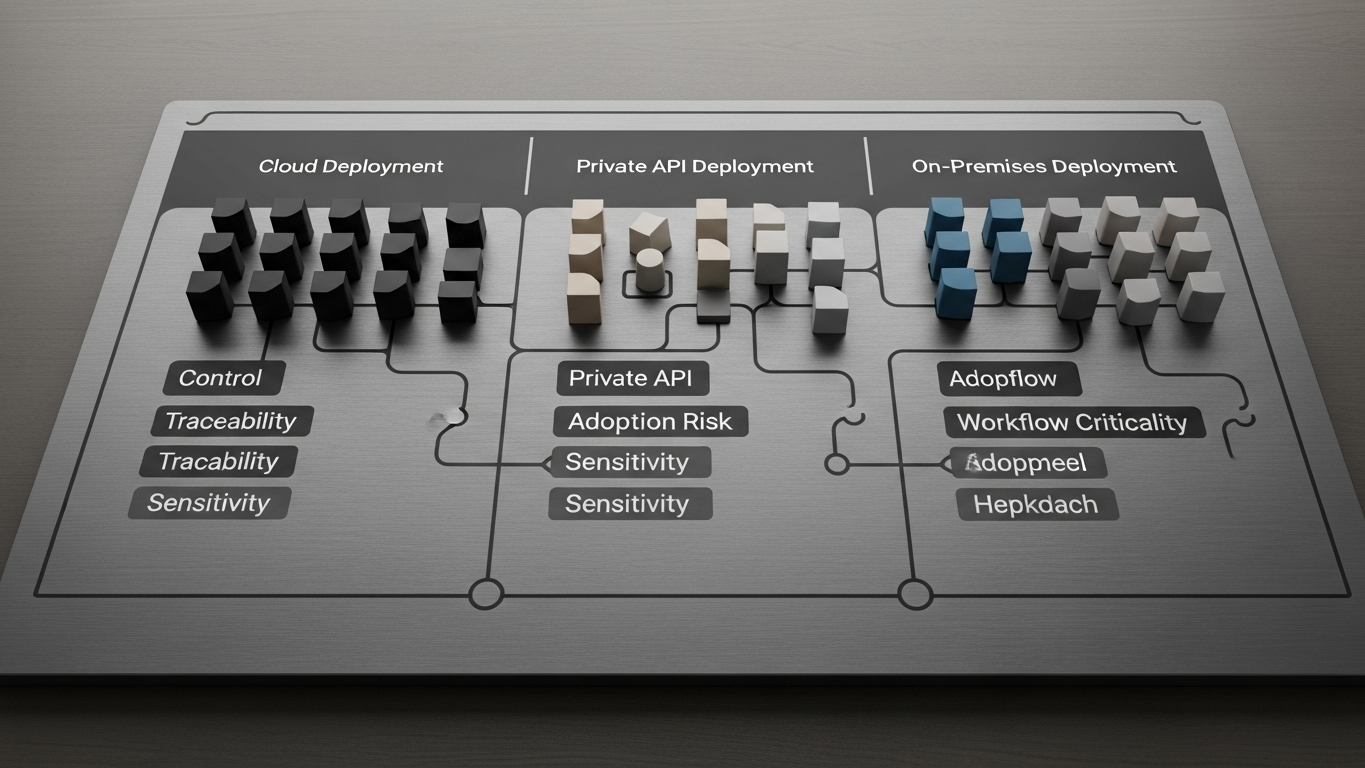

Industrial buyers should compare deployment modes by fit: data sensitivity, required control boundary, traceability, and the workflows you intend to enable. Infrastructure fashion is a weak proxy for any of these. Choose cloud-oriented AI when the use case is narrow, the data sensitivity class is low, and your provider can show in writing how storage, access, logging, and subprocessors match your policy. Choose on-prem, isolated tenant, or tightly governed private API patterns when the workflow touches proprietary process knowledge, regulated or customer-committed data, or decisions that need a reconstructable record tied to your own estate.

Organizational drag from a poor fit (approvals that never clear, teams avoiding high-value use cases) is real, but it is a different lens from the technical fit question and is covered separately in the deployment-cost discussion.

Why control beats slogans

Manufacturing AI can touch process logic, incident context, cost and capacity signals, and engineering judgment. Deployment is therefore a control choice: where payloads live, who administers the runtime, and what you can prove under review. Cloud can be the right answer when the workload is well bounded and the vendor’s account of its boundary is concrete. On-prem or isolated patterns earn their cost when the organization needs the runtime inside a fence it operates, or when data-class rules leave no credible alternative.

The decision is not about virtue. It is about whether the architecture matches the consequence of being wrong.

A compact decision filter

Use a simple gate before you argue about GPUs and invoices. If inputs include layouts, recipes, yields, supplier terms, or customer-specific quality signals, you are usually in territory where boundary clarity matters more than headline elasticity. If outputs inform CAPA, release decisions, or capital requests, traceability expectations rise. If geography and policy constrain where data may rest or who may process it, your shortlist should be driven by evidence, not by a preference for “cloud-native” aesthetics. If operations expects you to show your own perimeter the way you do for other plant-adjacent systems, shared-responsibility models need to be spelled out the way you spell them out for ERP extensions.

Use this as a gate, not as a religion. Hybrid setups are common; the requirement is an explicit boundary story, not a label.
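
The four conditions in the gate above can be written down as an explicit check. The following is a minimal sketch, not a prescribed tool: the `Workflow` fields and the `gate` function are hypothetical names introduced here for illustration, and the requirement strings paraphrase the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workflow:
    """Hypothetical description of one AI use case; field names are illustrative."""
    touches_proprietary_inputs: bool     # layouts, recipes, yields, supplier terms, customer quality signals
    informs_high_impact_decisions: bool  # CAPA, release decisions, capital requests
    residency_constrained: bool          # geography or policy limits where data rests or who processes it
    perimeter_evidence_expected: bool    # operations expects you to show your own perimeter


def gate(workflow):
    """Return the requirements raised by the gate; an empty list means no condition fired."""
    requirements = []
    if workflow.touches_proprietary_inputs:
        requirements.append("boundary clarity matters more than headline elasticity")
    if workflow.informs_high_impact_decisions:
        requirements.append("traceability expectations rise")
    if workflow.residency_constrained:
        requirements.append("shortlist driven by evidence on data location and processors")
    if workflow.perimeter_evidence_expected:
        requirements.append("shared-responsibility model spelled out as for ERP extensions")
    return requirements


# Example: an assistant that drafts CAPA input from recipe and yield data.
capa_assistant = Workflow(
    touches_proprietary_inputs=True,
    informs_high_impact_decisions=True,
    residency_constrained=False,
    perimeter_evidence_expected=False,
)
for requirement in gate(capa_assistant):
    print("-", requirement)
```

The function returns requirements rather than a deployment label on purpose: a hybrid setup can satisfy the list as long as its boundary is explicit.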

What buyers often get wrong

Weak comparisons sound like “cloud is faster” or “on-prem is safer.” Stronger comparisons ask what must never leave your intended environment, what logging and retention you need to defend a line or quality decision later, and who can administer the stack and approve model or configuration changes. Those questions belong in the same conversation as MES and ERP access reviews, not only in a generic cloud strategy deck.

What to verify before you commit

Before you commit, verify the data classes the workflow will touch, including data pasted in accidentally from ERP or QMS. Map the written data path from source system to model runtime and back, including support and admin access. Confirm the training policy: whether prompts, documents, or outputs can train or tune vendor models. Confirm whether your security team can map the deployment to existing segmentation and logging standards. Confirm whether high-impact outputs have a defined review path in your organization, independent of where the model runs.

If the vendor cannot answer in operational language, the deployment mode is not ready for industrial use.
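
One way to keep the verification steps above honest is to track them as a checklist that only counts written evidence. This is a sketch under stated assumptions: the check names, `open_items`, and `ready_to_commit` are hypothetical, and the evidence strings are placeholders rather than real documents.

```python
# Hypothetical check names; each paraphrases one verification step from the text.
CHECKS = (
    "data_classes_identified",       # including accidental paste from ERP or QMS
    "data_path_documented",          # source system to model runtime and back, plus support/admin access
    "training_policy_confirmed",     # whether prompts, documents, or outputs can train vendor models
    "maps_to_segmentation_logging",  # security team can map the deployment to existing standards
    "review_path_defined",           # high-impact outputs have a defined review path
)


def open_items(evidence):
    """Return the checks that have no written evidence attached, in checklist order."""
    return [check for check in CHECKS if not evidence.get(check, "").strip()]


def ready_to_commit(evidence):
    """True only when every check has some written evidence recorded against it."""
    return not open_items(evidence)


# Placeholder evidence: two of the five checks are documented so far.
evidence = {
    "data_classes_identified": "classification memo (placeholder reference)",
    "training_policy_confirmed": "contract clause (placeholder reference)",
}
print(open_items(evidence))
print(ready_to_commit(evidence))
```

A blank or missing entry counts as open, which mirrors the rule in the text: an answer that is not written down in operational language does not clear the check.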

DBR77 Vector supports manufacturing buyers who need deployment flexibility without trading away industrial discipline: on-premise, private API, and isolated patterns, client data excluded from training, reasoning oriented to factory transformation work, and human approval where decisions carry consequence. Fit here means the runtime can be aligned to the control bar your data class already implies.

On-prem versus cloud AI for manufacturing is a question of deployment fit against sensitivity, traceability, and policy, not of tribal preference. Choose the boundary you can defend, then demand the same evidence standard you would use for any other plant-critical system.

DBR77 Vector gives manufacturers private deployment options and stronger control over how industrial AI is used in operational environments. Review deployment options or review security.