Core problem: buyers focus on the primary vendor logo while embeddings, moderation, logging, or analytics hops silently cross extra legal and technical boundaries.

Main promise: a repeatable subprocessor and data-path review exposes every hop from plant systems to storage and back.

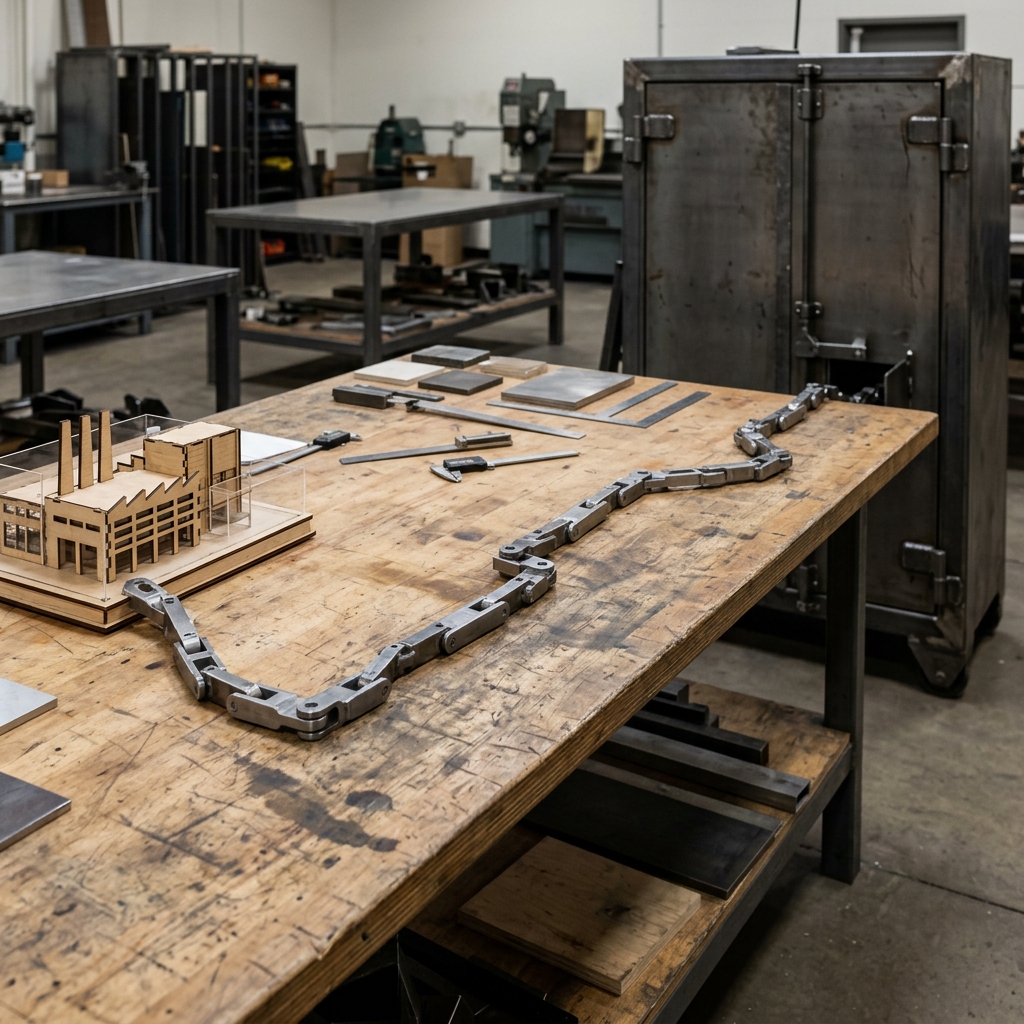

You are not buying one company. You are buying a chain—and manufacturing diligence has to follow the chain the way it follows integrations into ERP and MES. If the chain is incomplete on paper, it is incomplete in practice, no matter how polished the primary vendor’s homepage is.

Evaluate AI subprocessors in six steps: list every legal entity and service in the inference and support path; map data classes at each hop; confirm residency and encryption; verify training prohibitions both contractually and technically; test change notification; and require a diagram that matches production configuration. Update the register whenever integrations or model routes change. Hidden hops are where “private” claims quietly weaken.

A disciplined subprocessor pass

Request the full subprocessor list, including services toggled by feature flags. Mark each service as inference, logging, support access, billing telemetry, or security scanning. For each hop, record data types, retention, encryption, admin access model, and region. Cross-check against your procurement annex non-negotiables. Run a configuration review in a test tenant to catch routes that marketing diagrams omit.
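The register described above can be kept as structured records rather than a spreadsheet of free text. The following is a minimal sketch, assuming a Python-based review script; every class, field name, role spelling, and example value is hypothetical and should be replaced with the terms in your own procurement annex.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Tuple

# Role labels follow the categories named in the review step;
# the exact string spellings are an assumption of this sketch.
ROLES = frozenset({
    "inference", "logging", "support_access",
    "billing_telemetry", "security_scanning",
})


@dataclass(frozen=True)
class Hop:
    """One service in the data path (all field names are hypothetical)."""
    service: str
    legal_entity: str
    role: str
    data_types: Tuple[str, ...]
    retention_days: int
    encrypted: bool
    admin_access_model: str
    region: str
    behind_feature_flag: bool = False


@dataclass(frozen=True)
class NonNegotiables:
    """Limits copied from a procurement annex (example values only)."""
    allowed_regions: FrozenSet[str]
    max_retention_days: int
    require_encryption: bool = True


def review_hop(hop: Hop, rules: NonNegotiables) -> List[str]:
    """Return human-readable findings for a single hop."""
    findings = []
    if hop.role not in ROLES:
        findings.append(f"{hop.service}: unclassified role '{hop.role}'")
    if hop.region not in rules.allowed_regions:
        findings.append(f"{hop.service}: region {hop.region} not allowed")
    if hop.retention_days > rules.max_retention_days:
        findings.append(
            f"{hop.service}: retention {hop.retention_days}d exceeds "
            f"{rules.max_retention_days}d"
        )
    if rules.require_encryption and not hop.encrypted:
        findings.append(f"{hop.service}: encryption not confirmed")
    return findings


def review_register(register: List[Hop], rules: NonNegotiables) -> List[str]:
    """Check every hop, including those only enabled by feature flags."""
    return [f for hop in register for f in review_hop(hop, rules)]


# Illustrative data only; these vendors and regions are invented.
rules = NonNegotiables(allowed_regions=frozenset({"eu-central"}),
                       max_retention_days=30)
register = [
    Hop("chat-inference", "Vendor GmbH", "inference",
        ("work_instructions",), 0, True, "rbac", "eu-central"),
    Hop("usage-analytics", "Analytics Inc", "logging",
        ("prompts",), 90, True, "vendor_admin", "us-east",
        behind_feature_flag=True),
]
for finding in review_register(register, rules):
    print(finding)
```

Keeping the non-negotiables in one object means the same check can be rerun unchanged whenever the vendor publishes a revised subprocessor list.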

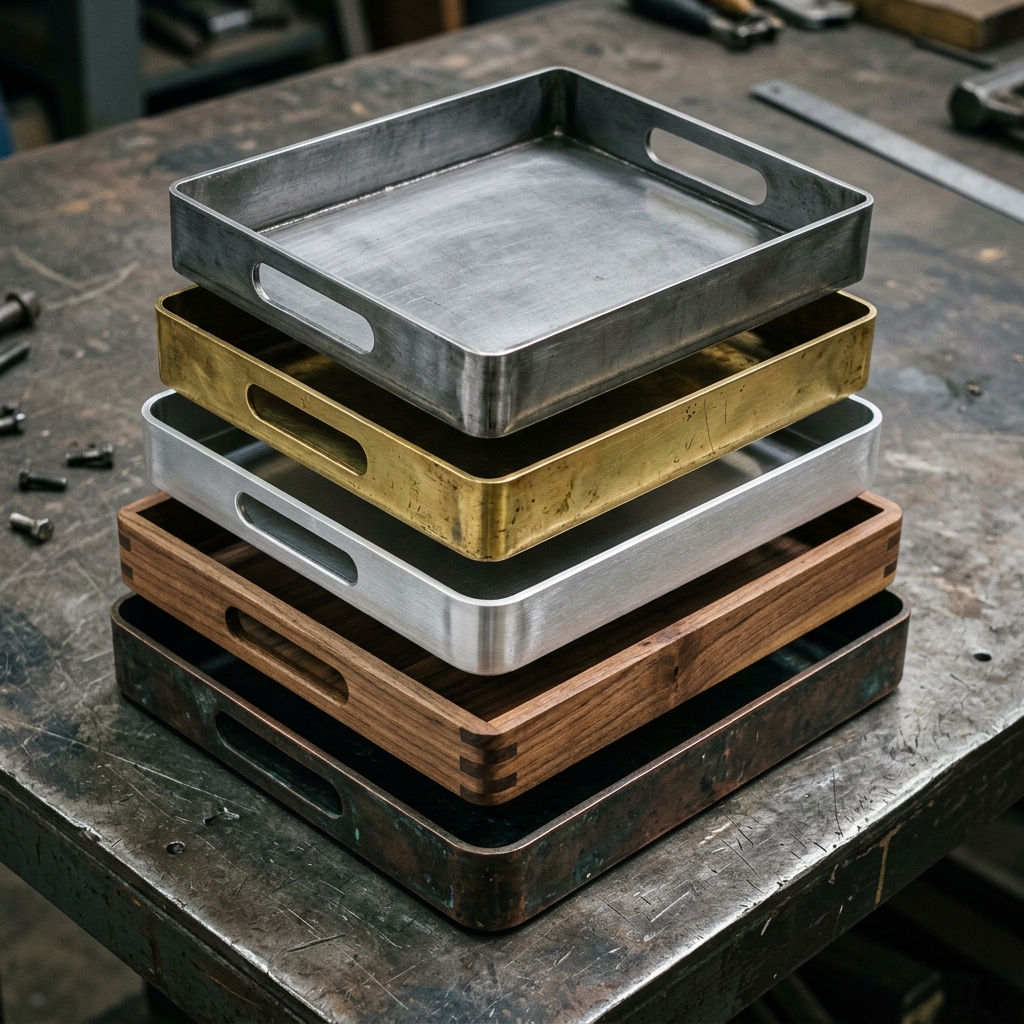

Data path layers to diagram explicitly

- Plant to AI edge: connectors, brokers, API gateways; authentication method and secret storage.
- Model runtime: hosting party, compute location, burst scaling behavior.
- Post-processing: moderation, formatting, citation tooling if present.
- Persistence: transcript stores, vector stores, ticket attachments.
- Observability: metrics vendors, SIEM forwarding, support screen sharing tools.
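One way to test whether a vendor diagram matches what a test tenant actually does is to compare the two layer by layer. The sketch below assumes you have already collected observed components (for example from egress logs or a configuration export); the layer keys, function name, and component names are all hypothetical.

```python
from typing import Dict, List, Set

# The five layers named above; key spellings are an assumption of this sketch.
LAYERS = (
    "plant_to_ai_edge", "model_runtime", "post_processing",
    "persistence", "observability",
)


def diagram_gaps(
    documented: Dict[str, Set[str]],
    observed: Dict[str, Set[str]],
) -> Dict[str, Dict[str, List[str]]]:
    """Report, per layer, components seen but not diagrammed and vice versa."""
    # Include any extra layer keys so nothing observed is silently dropped.
    extra = sorted((set(documented) | set(observed)) - set(LAYERS))
    gaps = {}
    for layer in list(LAYERS) + extra:
        doc = documented.get(layer, set())
        obs = observed.get(layer, set())
        undocumented = sorted(obs - doc)   # hops the diagram omits
        not_observed = sorted(doc - obs)   # diagrammed but never exercised
        if undocumented or not_observed:
            gaps[layer] = {
                "undocumented": undocumented,
                "not_observed": not_observed,
            }
    return gaps


# Illustrative data only; component names are invented.
documented = {
    "persistence": {"transcript-store"},
    "observability": {"metrics-vendor"},
}
observed = {
    "persistence": {"transcript-store", "vector-store"},
    "observability": set(),
}
print(diagram_gaps(documented, observed))
```

An entry under `undocumented` is a hop to raise with the vendor. An entry under `not_observed` may only mean the test did not exercise that route, so it is a prompt for a follow-up test rather than a finding on its own.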

Weak answers sound like “trust us” on payload visibility, “secure cloud” without region lists, “we care about privacy” without training traffic separation, and “standard updates” without notice windows and re-approval paths. Strong answers name roles, show RBAC models, map regions and subsystems, tie training exclusions to controls, and define change governance you can enforce.

The support-access hop everyone forgets

Manufacturing reviews often obsess over model hosting—and under-specify what happens when a vendor engineer troubleshoots a production issue. Screen sharing, temporary credential elevation, and export of logs for analysis can move sensitive payloads across boundaries you never intended. Your subprocessor map should include support tooling and break-glass behavior, not only the “main” AI service. If support access cannot be described with the same precision as operator access, you do not yet understand your real data path.

Annual renewal questions: any new subprocessors since last year; whether default logging verbosity increased; whether a feature enabled cross-tenant analytics you did not adopt; whether support troubleshooting still matches your access rules.
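The renewal questions above lend themselves to a simple year-over-year comparison. This is a minimal sketch, assuming each year's posture is captured as a plain dictionary snapshot; the keys, the verbosity ordering, and the example values are hypothetical.

```python
from typing import List

# Ordered from least to most verbose; the level names are an assumption.
VERBOSITY = ("error", "warning", "info", "debug")


def renewal_findings(previous: dict, current: dict) -> List[str]:
    """Compare last year's snapshot with this year's and list what changed."""
    findings = []
    added = set(current["subprocessors"]) - set(previous["subprocessors"])
    for name in sorted(added):
        findings.append(f"new subprocessor: {name}")
    if VERBOSITY.index(current["log_level"]) > VERBOSITY.index(previous["log_level"]):
        findings.append(
            f"logging verbosity increased: "
            f"{previous['log_level']} -> {current['log_level']}"
        )
    unapproved = set(current["enabled_features"]) - set(current["approved_features"])
    for feature in sorted(unapproved):
        findings.append(f"feature enabled without approval: {feature}")
    extra_roles = set(current["support_roles"]) - set(current["approved_support_roles"])
    for role in sorted(extra_roles):
        findings.append(f"support access outside your rules: {role}")
    return findings


# Illustrative snapshots only; all names are invented.
last_year = {
    "subprocessors": ["model-host", "metrics-vendor"],
    "log_level": "warning",
    "enabled_features": [], "approved_features": [],
    "support_roles": ["read_only"], "approved_support_roles": ["read_only"],
}
this_year = {
    "subprocessors": ["model-host", "metrics-vendor", "moderation-api"],
    "log_level": "debug",
    "enabled_features": ["cross_tenant_analytics"], "approved_features": [],
    "support_roles": ["read_only", "log_export"],
    "approved_support_roles": ["read_only"],
}
for line in renewal_findings(last_year, this_year):
    print(line)
```

The output is only as good as the snapshots, so each field should come from vendor-supplied evidence or a configuration export, not from memory.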

Hop-by-hop path maps only hold when the vendor names every relay, retention rule, and break point the way you diagrammed the stack. Vector, the industrial AI inside the DBR77 ecosystem, belongs in that diligence pack: it is a proprietary model trained on factory transformation knowledge, it offers on-premise, private API, and isolated deployment options, it excludes client data from training, and it favors industrial reasoning over generic chat, so subprocessors and routes stay legible under renewal questioning.

Subprocessor diligence is not paperwork theater. It is how you keep factory truth from taking silent detours. Diagram the chain, then test the chain.

Plant checkpoint

Treat “How to Evaluate AI Subprocessors and Data Paths in Manufacturing” as a decision tool, not background reading. Before the next steering meeting, ask for one artifact that proves your posture: an architecture diagram, a training-policy excerpt, a log sample, a signed workflow classification, or a promotion record. If the room can only tell stories, the program is still a pilot, whatever it is called. Manufacturing AI matures when evidence becomes routine: the same discipline you already expect before a line release, a supplier change, or a major IT cutover. That is the shift from excitement to infrastructure, and it is what keeps programs coherent across audits, turnover, and multi-site expansion.

If leadership wants one crisp decision habit, make it this: name what must be true before usage expands, then review whether it is true on a fixed cadence. That is how governance stops being a narrative comfort and becomes an operating metric your plants can execute.

DBR77 Vector supports buyers who need a transparent discussion of boundaries for subprocessors, deployment modes, and training posture. Review security or book a demo.