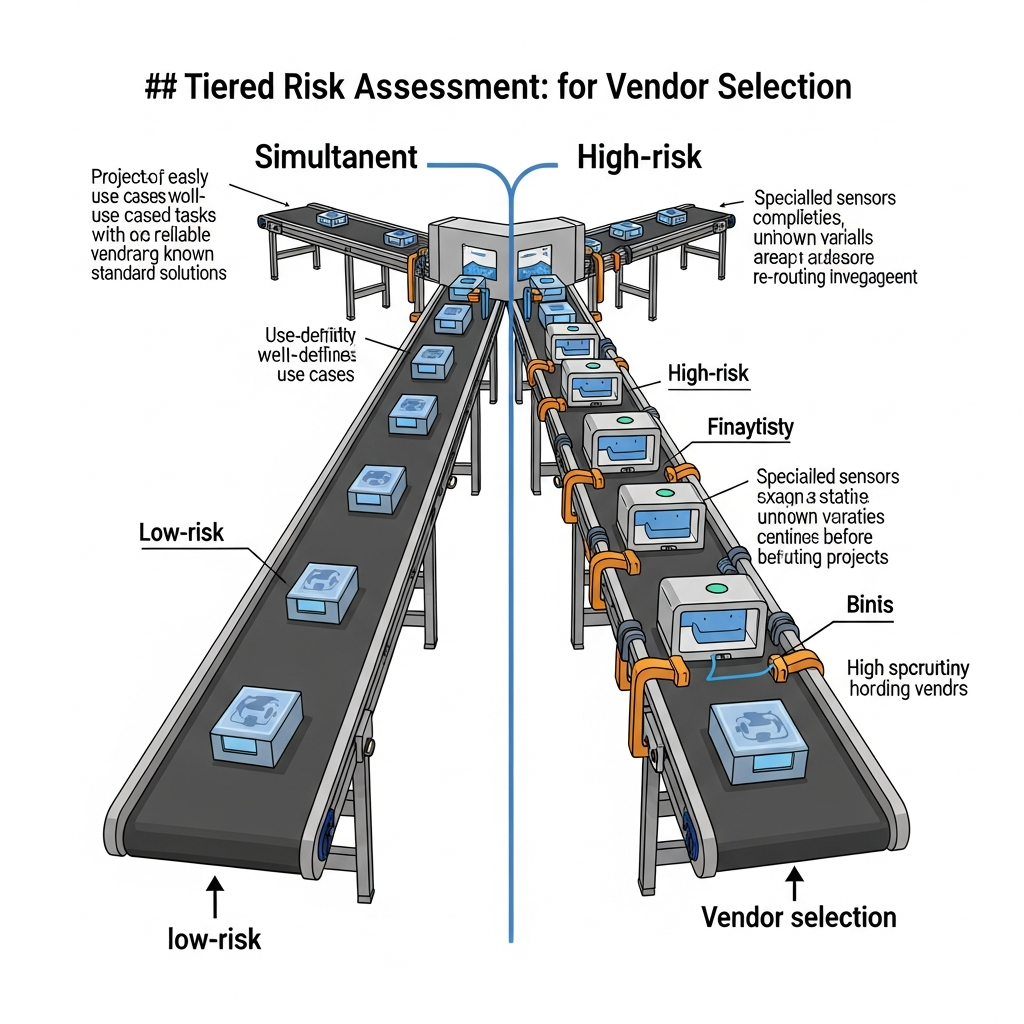

Core problem: teams label every AI idea as urgent, which hides differences in data sensitivity, automation depth, and the blast radius if the model is wrong.

Main promise: a simple risk tier framework aligns adoption pace with deployment boundaries, approval depth, and integration discipline.

Not every AI use case deserves the same runway. Classification is how you keep speed without losing control—because manufacturing adoption fails in two opposite ways: paralysis (“we can’t allow anything”) and recklessness (“it’s just a chatbot”). A tiered model turns opinions into a repeatable sorting rule.

Classify factory AI use cases by combining data sensitivity, decision authority, integration touchpoints, and reversibility. Low-risk tiers can move with lighter gates. High-risk tiers require private or isolated deployment, explicit human approval, full logging, and integration change control before any production traffic. Risk tiers do not replace judgment; they make judgment consistent across shifts, sites, and sponsors.

Framework: four dimensions

Score each proposed use case on four dimensions.

Data sensitivity: does it touch recipes, yields, costs, customer orders, or safety parameters, or only anonymized aggregates?

Decision authority: does the output sit purely in analytics, inform a human choice, or recommend automated actuation?

Integration depth: does it read or write MES, QMS, CMMS, or SCADA-adjacent systems, or does it stay in documents?

Reversibility: can you roll back in minutes, or does a wrong output create scrap, downtime, or safety exposure?
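One way to make the four dimensions comparable across sites is to record each as an ordinal level. The sketch below is illustrative only: the `Level` scale, the `UseCaseScore` record, and the `worst_dimension` helper are hypothetical names, and the four-step scale is an assumption rather than part of the framework.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Tuple


class Level(IntEnum):
    """Hypothetical ordinal scale shared by all four dimensions."""
    LOW = 0
    MODERATE = 1
    HIGH = 2
    CRITICAL = 3


@dataclass(frozen=True)
class UseCaseScore:
    """One scored use case; field names mirror the four dimensions."""
    name: str
    data_sensitivity: Level
    decision_authority: Level
    integration_depth: Level
    irreversibility: Level  # higher means harder to roll back

    def worst_dimension(self) -> Tuple[str, Level]:
        """Return the highest-scoring dimension (first one wins on ties)."""
        dims = {
            "data_sensitivity": self.data_sensitivity,
            "decision_authority": self.decision_authority,
            "integration_depth": self.integration_depth,
            "irreversibility": self.irreversibility,
        }
        name = max(dims, key=dims.get)
        return name, dims[name]


score = UseCaseScore(
    name="shift report summarizer",
    data_sensitivity=Level.LOW,
    decision_authority=Level.LOW,
    integration_depth=Level.MODERATE,
    irreversibility=Level.LOW,
)
print(score.worst_dimension())
```

Surfacing the worst dimension, rather than an average, reflects the idea that a single high-exposure dimension can drive the tier on its own. That choice is an assumption of this sketch.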

Tier model: green, amber, red, black

Green typically covers internal documents with no production writes and synthetic or public data. Standard IT policy and basic logging may suffice.

Amber covers operational analytics with human-only decisions and limited personal data. It calls for a private API or an approved cloud boundary with a retention policy.

Red covers production-adjacent reads and quality or planning decisions that affect the schedule. It calls for an on-premise or isolated tenant, disclosed subprocessors, and an approval workflow.

Black covers actuation hooks, safety-critical parameters, or regulated records. It calls for hard isolation by site or workflow, no generic public tooling, and a full audit trail. Black is rare, and when it appears, pause until the architecture matches the tier.
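The tier descriptions can be kept as a lookup table so that a gap check is mechanical. The following is a minimal sketch: the control strings paraphrase the tiers described here, while the `Tier` enum, `REQUIRED_CONTROLS`, and `missing_controls` are hypothetical names. It assumes controls are listed per tier and are not cumulative, which the framework does not state either way.

```python
from enum import IntEnum
from typing import Iterable, List


class Tier(IntEnum):
    GREEN = 0
    AMBER = 1
    RED = 2
    BLACK = 3


# Controls per tier, paraphrased from the tier descriptions (not cumulative).
REQUIRED_CONTROLS = {
    Tier.GREEN: ["standard IT policy", "basic logging"],
    Tier.AMBER: ["private API or approved cloud boundary", "retention policy"],
    Tier.RED: [
        "on-premise or isolated tenant",
        "subprocessors disclosed",
        "approval workflow",
    ],
    Tier.BLACK: [
        "hard isolation by site or workflow",
        "no generic public tooling",
        "full audit trail",
    ],
}


def missing_controls(tier: Tier, in_place: Iterable[str]) -> List[str]:
    """List the controls the tier expects that are not yet in place."""
    present = set(in_place)
    return [c for c in REQUIRED_CONTROLS[tier] if c not in present]


gaps = missing_controls(Tier.RED, ["approval workflow"])
print(gaps)
```

An empty result would mean the architecture matches the tier. A non-empty result for a black use case is the signal to pause.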

Classify before you charter

Write one sentence on the operational outcome; if you cannot state the decision class, you cannot score risk. Inventory the data classes touched, including exports, screenshots, and support tickets. Map integrations as read versus write, because writes escalate the tier almost automatically. Assign the tier and publish its required controls so procurement and security see the same label.
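The pre-charter steps above can be sketched as a small record plus an assignment rule. Everything here is hypothetical: the `Charter` and `Integration` types, the `assign_tier` function, and in particular the rule that any write integration raises the baseline by exactly one tier, which is one possible reading of "writes escalate the tier almost automatically".

```python
from dataclasses import dataclass
from typing import List

TIERS = ["green", "amber", "red", "black"]


@dataclass
class Integration:
    system: str  # e.g. "MES", "QMS", "CMMS"
    mode: str    # "read" or "write"


@dataclass
class Charter:
    outcome: str              # one sentence on the operational outcome
    data_classes: List[str]   # include exports, screenshots, support tickets
    integrations: List[Integration]
    baseline_tier: str        # tier judged from data and decision class


def assign_tier(charter: Charter) -> str:
    """Refuse to score without an outcome; escalate one tier on any write."""
    if not charter.outcome.strip():
        raise ValueError("state the operational outcome before scoring risk")
    idx = TIERS.index(charter.baseline_tier)
    if any(i.mode == "write" for i in charter.integrations):
        # Assumed escalation rule: one step up, capped at black.
        idx = min(idx + 1, len(TIERS) - 1)
    return TIERS[idx]


charter = Charter(
    outcome="Planner decides which work orders to resequence each shift.",
    data_classes=["customer orders", "schedule exports"],
    integrations=[Integration("MES", "read"), Integration("CMMS", "write")],
    baseline_tier="amber",
)
print(assign_tier(charter))  # prints "red"
```

Keeping the outcome sentence as a required field makes the first step enforceable: a charter without it cannot be scored at all.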

This framework fails when teams hide shadow paths—operators pasting line data into personal chat tools. Run a quarterly shadow-use scan alongside formal projects.

Green-through-black tiering is useless if the platform class cannot tighten with the tier: identity scope, data paths, logging depth, and promotion rules must move in step. Vector is built for that ladder: proprietary industrial AI with deployment options that scale from controlled patterns to stronger isolation, client data excluded from training the shared model, and industrial reasoning trained on factory transformation knowledge instead of consumer-style chat defaults.

Risk classification is not bureaucracy. It is how manufacturers adopt AI at the right speed for each decision type. Sort use cases before you sort vendors.

Plant checkpoint

Treat “How to Classify Factory Use Cases by AI Risk Before Adoption” as a decision tool, not background reading. Before the next steering meeting, ask for one artifact that proves your posture: an architecture diagram, a training-policy excerpt, a log sample, a signed workflow classification, or a promotion record. If the room can only tell stories, the program is still a pilot, whatever it is called. Manufacturing AI matures when evidence becomes routine, with the same discipline you already expect before a line release, a supplier change, or a major IT cutover. That is the shift from excitement to infrastructure, and it is what keeps programs coherent across audits, turnover, and multi-site expansion.

If leadership wants one crisp decision habit, make it this: name what must be true before usage expands, then review whether it is true on a fixed cadence. That is how governance stops being a narrative comfort and becomes an operating metric your plants can execute.

DBR77 Vector maps to higher-risk tiers through private API, on-premise, and isolated deployment patterns, with industrial reasoning and no training on client data. Explore products using Vector or review security.