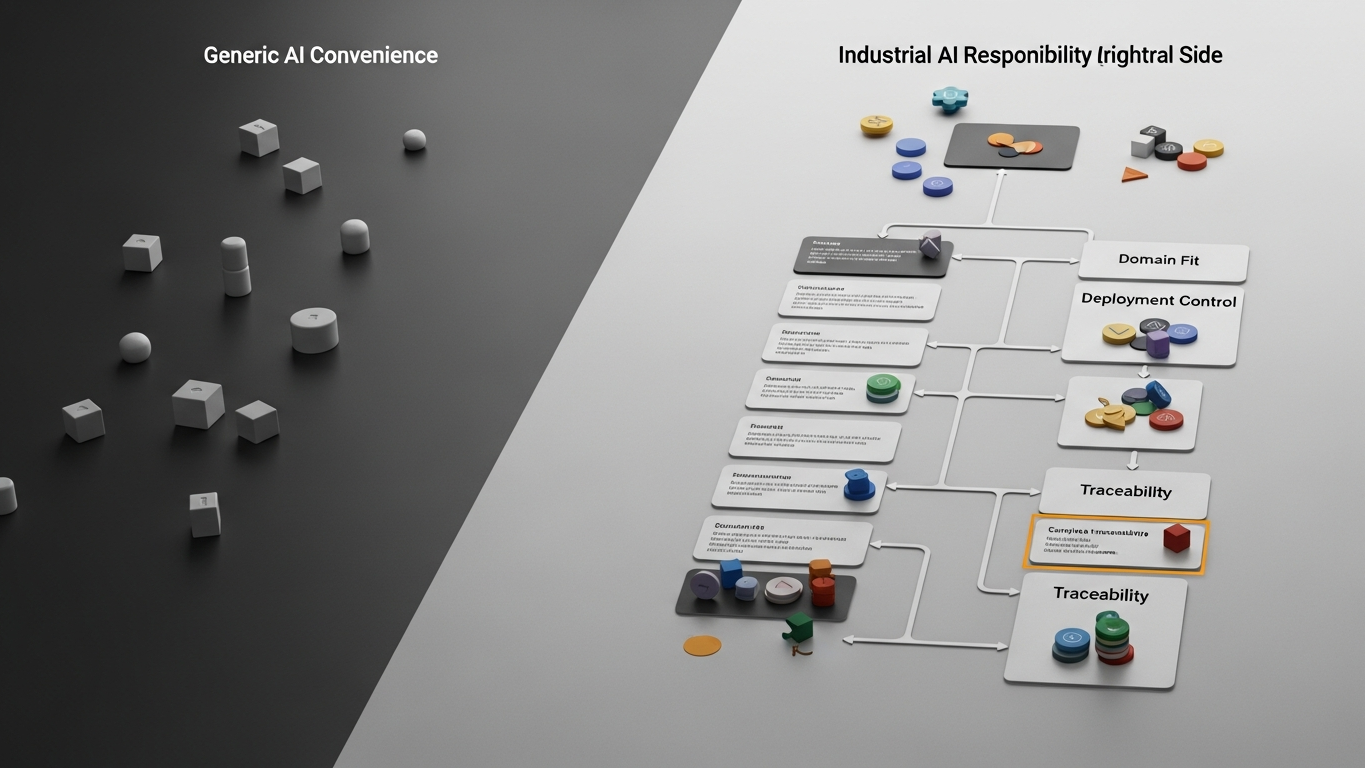

Core problem: teams judge industrial AI against generic LLMs on fluency, benchmark scores, or single-turn answer quality, not on whether the capability can survive real plant accountability.

Main promise: the decisive gap is governed industrial fitness and consequence handling, not how accurate or articulate a reply sounds in isolation.

Industrial buyers often start with a fair-sounding question: which system produces the better answer on the spot? In a factory context, that question is incomplete. A strong-looking sentence can still be the wrong class of support for work where mistakes propagate into cost, quality, safety, or customer exposure. The comparison that matters is whether the capability is built to operate where decisions are owned, reviewed, and traceable.

A generic large language model is optimized for broad language completion under weak operational accountability. Industrial AI, in the sense serious manufacturers need, is optimized for governed fit: controlled data paths, explicit training and retention boundaries, role-appropriate human review, and outputs that can stand next to MES, ERP, and QMS workflows without breaking the chain of responsibility. The gap is therefore not primarily “smarter text.” It is whether the system can be run, defended, and corrected when something goes wrong on the line or in the audit room.

Why accuracy and fluency mislead the comparison

Accuracy on generic tasks and fluent prose are easy to demo. They do not, by themselves, establish that plant-specific constraints were respected, that missing context was surfaced instead of smoothed over, that a recommendation can be tied to an accountable decision record, or that deployment and data-handling rules match what security and quality teams require. A model can score well on benchmarks and still be a poor fit for industrial use because the failure mode is not “sounds dumb.” The failure mode is “sounds confident while bypassing the controls your environment requires.”

What governed industrial fitness includes

Industrial fitness is the bundle of properties that let AI sit credibly inside high-consequence work. Boundary clarity means knowing where the model runs, what data may enter, what leaves the tenant, and what training or retention is contractually allowed. Workflow alignment means suggestions connect to approvals, tickets, deviations, and systems of record rather than stopping at a chat transcript. Traceability means enough structure to explain what was advised, under what inputs, and who released the next step. Consequence awareness is not a vibe; it is process behavior that lets your review steps catch errors before they hit the floor.
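To make the traceability property concrete, here is a minimal Python sketch of a decision record that ties advice to its inputs and to the person who released the next step. Every name in it (`SourceRef`, `DecisionRecord`, `release`, the role strings) is a hypothetical illustration, not a description of any product's data model.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class SourceRef:
    """A controlled document the advice was based on (hypothetical shape)."""
    doc_id: str
    revision: str


@dataclass
class DecisionRecord:
    """Ties one piece of advice to its inputs and to the human who released it."""
    advice: str
    sources: list[SourceRef]
    created_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))
    released_by: Optional[str] = None
    released_role: Optional[str] = None

    def release(self, user: str, role: str, allowed_roles: set[str]) -> None:
        """Record who released the next step; refuse roles without authority."""
        if not self.sources:
            raise ValueError("advice without traceable inputs cannot be released")
        if role not in allowed_roles:
            raise PermissionError(f"role {role!r} may not release this step")
        self.released_by = user
        self.released_role = role

    @property
    def is_released(self) -> bool:
        """True once an authorized person has released the next step."""
        return self.released_by is not None
```

The design choice worth noting is that release is a separate, role-checked step: the record can exist as advice without ever becoming an action, which mirrors the split between what was advised and who released it.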

This is a different design target than maximizing helpful-sounding continuations for arbitrary prompts.

Consequence changes what “good” means

In office-style tasks, a wrong draft is often cheap to fix. In manufacturing, the same class of error can mean a wrong batch release, a missed hold point, or a customer-facing commitment built on incomplete facts. The organization still owns the outcome. Industrial AI needs to be judged by whether it strengthens defensible decisions, not whether it reduces typing time on low-stakes text.

Plant-side reality: changeover guidance without your boundary

Imagine a team asks for changeover steps for a line that runs several SKUs. A generic LLM can summarize textbook practice or public articles. It does not automatically know your validated sequence, your LOTO points, the QA release that blocks restart, or which document revision is current. A fluent paragraph can still contradict the controlled plan or omit a step your QMS treats as mandatory. Industrial fitness shows up when assistance is constrained to approved sources, flags uncertainty against your master data, and produces a path that quality and operations can sign off, with a record that survives a later trace request.
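One way to picture "constrained to approved sources" is a check that compares what an answer cites against the controlled document register. The sketch below is an assumption-laden illustration: `Citation`, `flag_uncontrolled`, and the `master_revisions` mapping are hypothetical names, and a real plant would read current revisions from its own document control system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Citation:
    """A document and revision that a generated answer claims to rely on."""
    doc_id: str
    revision: str


def flag_uncontrolled(citations, master_revisions):
    """Return flags for citations that are not approved or not current.

    master_revisions maps doc_id -> current approved revision
    (hypothetical stand-in for master data).
    An empty list means every citation matched the controlled record.
    """
    flags = []
    if not citations:
        flags.append("no controlled source cited")
    for c in citations:
        current = master_revisions.get(c.doc_id)
        if current is None:
            flags.append(f"{c.doc_id}: not an approved source")
        elif current != c.revision:
            flags.append(f"{c.doc_id}: cites rev {c.revision}, current is rev {current}")
    return flags
```

An answer with any flag would go to a human reviewer rather than to the floor. The check does not judge whether the prose is good, only whether it stands on the controlled plan.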

Plant-side reality: supplier threads and deviation risk

Another common case is summarizing email threads about a supplier issue or a waiver. A generic model can produce a readable narrative. It may not surface that a proposed concession conflicts with a clause in your quality agreement, or that the right next action is a formal deviation rather than an informal reply. The risk is not only wrong wording. It is that the tool accelerates action without embedding the checks your governance expects. Industrial AI fit is whether the workflow makes conflicts visible, routes to the correct role, and preserves enough context for a controlled decision, not whether the summary felt smooth in the moment.

How to keep the comparison honest

When you evaluate options, separate three lenses that are often blurred together: language capability (breadth and polish of generation), industrial fitness (governance, deployment, traceability, and review behavior), and buying category (whether you are comparing full industrial layers or thin convenience wrappers on general models). The first lens dominates vendor demos. The second lens determines whether the tool belongs beside production and quality decisions. The third lens belongs in a dedicated shortlist review so category confusion does not masquerade as model quality.
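The separation of lenses can be sketched as a small scoring structure that never averages the three together. This is only an illustration under stated assumptions: the 0 to 5 scales, the `min_fitness` gate of 3, and the category labels are all hypothetical choices, not a recommended rubric.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Option:
    name: str
    category: str             # e.g. "industrial_layer" or "wrapper" (labels are assumptions)
    language_capability: int  # 0-5, from demos and benchmarks
    industrial_fitness: int   # 0-5, from governance, deployment, traceability review


def compare_within_category(options, min_fitness=3):
    """Group by buying category, drop options below the fitness gate,
    and rank the rest by fitness first, language capability second."""
    by_category = defaultdict(list)
    for o in options:
        if o.industrial_fitness >= min_fitness:
            by_category[o.category].append(o)
    return {
        cat: sorted(
            group,
            key=lambda o: (o.industrial_fitness, o.language_capability),
            reverse=True,
        )
        for cat, group in by_category.items()
    }
```

Because fitness acts as a gate and the primary sort key, a polished demo cannot lift an option that fails governance review, and options are only ranked against others in the same buying category.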

DBR77 Vector is positioned around governed industrial intelligence: deployment options that respect sovereignty, client data excluded from model training, proprietary industrial reasoning grounded in transformation practice, and human approval where stakes require it. That positioning targets fitness and consequence handling as the product promise, not generic conversational prestige.

The difference between a generic LLM and industrial AI is larger than accuracy or fluency. It is the difference between open-ended language assistance and a controlled decision support layer that your organization can run, audit, and own when outcomes matter.

DBR77 Vector gives manufacturers a more controlled industrial AI path than generic copilots by combining private deployment, domain fit, and human approval.