Core problem: many AI workflows are adopted without enough visibility into how outputs were produced, reviewed, and used in consequential industrial decisions.

Main promise: manufacturers need traceability because decision support without auditability is difficult to trust, defend, and improve.

Many AI systems look useful in the moment. The harder question appears later, often under pressure: can you explain how the output was produced, reviewed, and used? In manufacturing, that question carries real weight, because “useful” is not the same as “defensible,” and defensibility is what turns assistance into infrastructure.

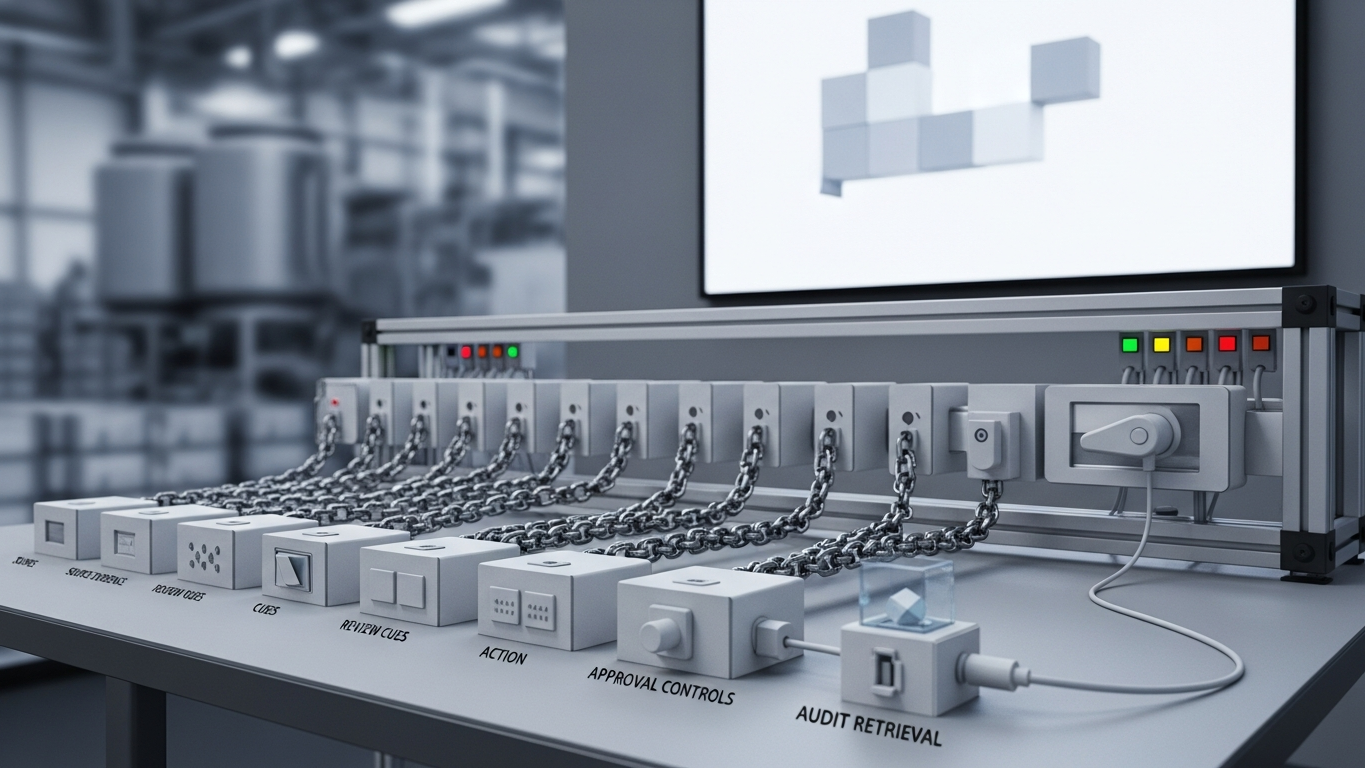

Some teams treat traceability like an optional technical detail. It is not. In industrial environments, traceability helps answer what input shaped the output, what context was used, who reviewed the recommendation, what action followed, and what happened after the decision. That is decision infrastructure, not admin overhead. Without it, AI becomes a parallel channel that competes with your systems of record instead of strengthening them.
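As a rough illustration, the five questions above can be treated as fields on a single record per decision. The sketch below is one hypothetical way to do that in Python. The `DecisionTrace` class, its field names, and the sample values are assumptions made for illustration. They do not describe any particular product's schema.

```python
from dataclasses import dataclass, field, fields
from datetime import datetime, timezone
from typing import Optional


@dataclass
class DecisionTrace:
    """One AI-supported decision, recorded end to end (hypothetical schema)."""

    decision_id: str
    input_refs: list[str] = field(default_factory=list)    # what input shaped the output
    context_refs: list[str] = field(default_factory=list)  # what context was used
    output_summary: Optional[str] = None                   # what the AI recommended
    reviewed_by: Optional[str] = None                      # who reviewed the recommendation
    action_taken: Optional[str] = None                     # what action followed
    outcome: Optional[str] = None                          # what happened after the decision
    created_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))

    def missing(self) -> list[str]:
        """Return the names of trace fields that are still empty."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]


# Illustrative values only: a recommendation that was reviewed but not yet closed out.
trace = DecisionTrace(
    decision_id="D-001",
    input_refs=["sensor-export-a"],
    output_summary="Reduce line speed",
    reviewed_by="shift-lead",
)
print(trace.missing())
```

A record like this is only useful if empty fields are visible. Here `missing()` reports that context, action, and outcome were never captured, which is exactly the gap a later review would otherwise have to fill from memory.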

Why industrial decisions need this standard

When AI touches decisions around production, downtime, CAPEX, or process changes, the organization needs a stronger record of how judgment was formed. Without that, teams may struggle to defend decisions under customer scrutiny, review mistakes without guessing, improve workflows with evidence rather than anecdotes, and maintain accountability when roles change or shifts rotate.

Traceability protects trust

If an AI recommendation cannot be reconstructed, trust weakens. Teams may still use the system when it feels convenient, but they will hesitate when the stakes rise. That limits adoption exactly where better AI could be most valuable, because the tool is treated as a shortcut for low-stakes work rather than a partner for high-stakes judgment.

Governance depends on traceability

Strong governance is difficult when the system cannot show where the insight came from, who saw it, who approved it, and how it influenced the final action. Traceability is what makes review real instead of symbolic. It is also what makes improvement possible: you cannot fix a failure mode you cannot see.

Industrial buyers should expect AI systems to support input visibility, output history, approval trace, access control, and reviewability after the fact. That is a practical auditability standard—one that should feel familiar to anyone who has lived through a serious quality investigation.

Audit drill: pick one consequential workflow and ask your team to reconstruct it from logs alone. If the story depends on memory or screenshots, traceability is not yet real.
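The drill can also be run mechanically. The sketch below assumes a hypothetical log format in which each event carries a `decision_id`, an ISO 8601 timestamp `ts`, and a `stage` label. The stage names in `REQUIRED_STAGES` and the sample events are made up for illustration, so adapt them to whatever your own systems actually record.

```python
# Stages a complete decision story should contain (assumed names, not a standard).
REQUIRED_STAGES = ("input", "output", "review", "approval", "action", "outcome")


def reconstruct(events, decision_id):
    """Rebuild one decision's timeline from log events and list the missing stages."""
    timeline = sorted(
        (e for e in events if e["decision_id"] == decision_id),
        key=lambda e: e["ts"],  # same-format ISO 8601 strings sort chronologically
    )
    seen = {e["stage"] for e in timeline}
    gaps = [stage for stage in REQUIRED_STAGES if stage not in seen]
    return timeline, gaps


# Made-up sample log: the approval and outcome were never recorded for D-001.
events = [
    {"decision_id": "D-001", "ts": "2024-01-10T08:05:00Z", "stage": "output"},
    {"decision_id": "D-001", "ts": "2024-01-10T08:00:00Z", "stage": "input"},
    {"decision_id": "D-002", "ts": "2024-01-10T08:10:00Z", "stage": "input"},
    {"decision_id": "D-001", "ts": "2024-01-10T09:30:00Z", "stage": "review"},
    {"decision_id": "D-001", "ts": "2024-01-10T11:00:00Z", "stage": "action"},
]

timeline, gaps = reconstruct(events, "D-001")
print([e["stage"] for e in timeline])
print(gaps)
```

If `gaps` is non-empty, the story still depends on someone remembering what happened, which is the condition the drill is meant to expose.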

DBR77 Vector is positioned for industrial settings where trust depends on stronger governance and review: private deployment options, no training on client data, industrial reasoning, and human approval over critical judgment. That makes traceability part of the operating logic instead of an afterthought.

If you cannot audit how AI supported an industrial decision, your governance is weaker than it looks. In manufacturing, traceability is what turns AI usefulness into defensible decision support.

Plant checkpoint

Treat “Can You Audit Your AI? Why Traceability Matters in Industrial Decisions” as a decision tool, not background reading. Before the next steering meeting, ask for one artifact that proves your posture: an architecture diagram, a training-policy excerpt, a log sample, a signed workflow classification, or a promotion record. If the room can only tell stories, the program is still a pilot, whatever it is called.

Manufacturing AI matures when evidence becomes routine: the same discipline you already expect before a line release, a supplier change, or a major IT cutover. That is the shift from excitement to infrastructure, and it is what keeps programs coherent across audits, turnover, and multi-site expansion.

Finally, treat ambiguity as debt: every unanswered question about data paths, training defaults, or approval routing is something your future self will pay for under time pressure, usually during an audit, an incident, or a rushed rollout.

If leadership wants one crisp decision habit, make it this: name what must be true before usage expands, then review whether it is true on a fixed cadence. That is how governance stops being a narrative comfort and becomes an operating metric your plants can execute.

DBR77 Vector supports stronger industrial AI traceability through governed deployment, reviewability, and human approval. Review governance readiness or review security.