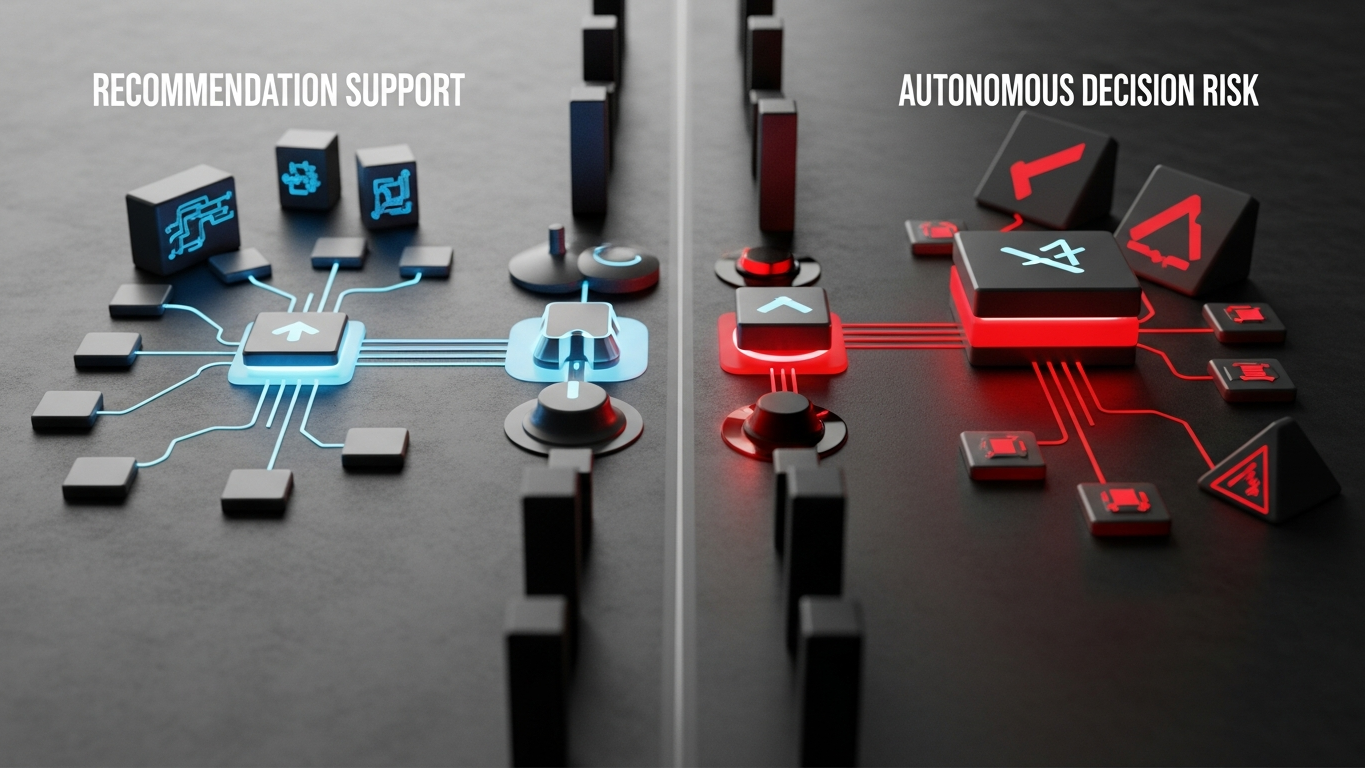

Core problem: many AI narratives overpromise autonomy in environments where the consequences of a decision still require human judgment and accountability.

Main promise: manufacturers should value AI as decision support first, because unsupported autonomy can increase risk faster than it creates value.

More autonomy is not a universal upgrade in factories. It is a scaling knob that must track consequence. The useful industrial pattern is recommendation with accountable review. The dangerous pattern is action or irreversible commitment without enough human judgment in the loop.

Treat AI as decision support when wrong outputs can change production, quality, spend, or customer commitments. Reserve unattended automation for narrow bands where inputs are well bounded, reversibility is high, and you have engineering controls comparable to those used for traditional automation. When vendors blur “recommends” and “decides,” push for explicit boundaries in workflow design, not in marketing language. How approval is routed by role, data class, and system is a design topic of its own; this piece stays on the autonomy boundary principle.

Why consequence breaks the demo story

Demos reward fluent completion. Plants reward stable throughput, defect control, and defensible choices under variability. A wrong recommendation that a human catches is an annoyance. A wrong recommendation that becomes a work order revision, a material release, or a CAPEX narrative before review is a different class of failure: it is written into systems, schedules, and reputations before anyone realizes the model was operating outside its real competence.

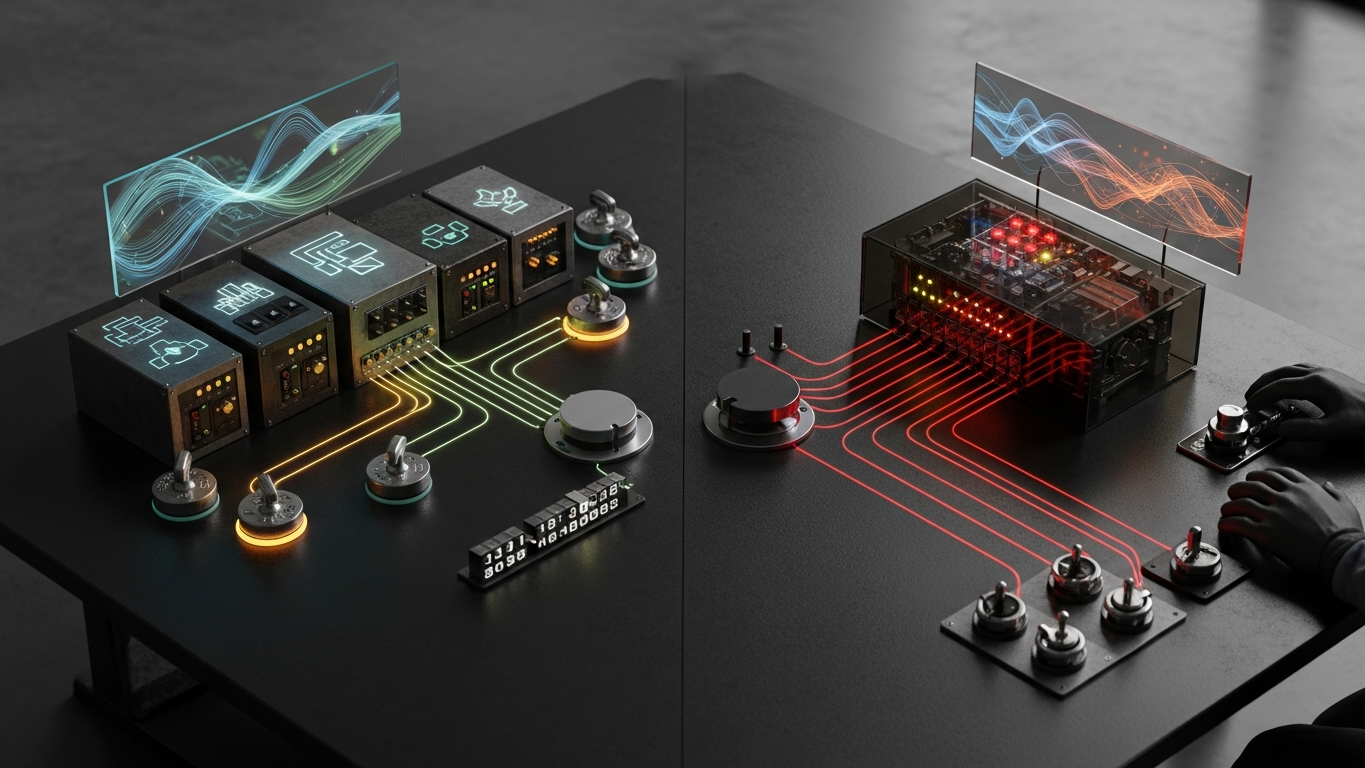

What useful recommendation looks like

Strong industrial assistance tends to surface options and trade-offs with explicit assumptions, tie suggestions to the inputs provided so teams can sanity-check, speed structuring and comparison without claiming final judgment, and fail visibly when context is missing instead of filling gaps with confident prose. That pattern raises decision quality without pretending the plant is a lab where mistakes are cheap.
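One way to make that pattern concrete is to give the recommendation a shape that cannot hide its assumptions. The sketch below is illustrative only: the field names, the required inputs (`line_id`, `scrap_rate_pct`, `changeover_minutes`), and the two example options are hypothetical placeholders, not part of any specific product or plant system. It shows options with trade-offs, the inputs each suggestion rests on, and a visible failure when context is missing.

```python
from dataclasses import dataclass


class MissingContextError(ValueError):
    """Raised when required inputs are absent, instead of guessing."""


@dataclass
class Option:
    label: str
    trade_offs: list[str]


@dataclass
class Recommendation:
    options: list[Option]
    assumptions: list[str]          # stated so reviewers can challenge them
    inputs_used: dict[str, object]  # echoed back so teams can sanity-check
    is_final_decision: bool = False # advisory by construction in this sketch


# Hypothetical inputs; a real workflow would define its own.
REQUIRED_INPUTS = ("line_id", "scrap_rate_pct", "changeover_minutes")


def build_recommendation(inputs: dict[str, object]) -> Recommendation:
    # Fail visibly when context is missing rather than filling the gap.
    missing = [key for key in REQUIRED_INPUTS if inputs.get(key) is None]
    if missing:
        raise MissingContextError(f"missing inputs: {', '.join(missing)}")

    # Placeholder options; the point is the structure, not the content.
    options = [
        Option("Keep current batch size",
               ["no schedule change", "scrap exposure unchanged"]),
        Option("Reduce batch size",
               ["more changeovers", "smaller exposure per defect"]),
    ]
    return Recommendation(
        options=options,
        assumptions=["scrap_rate_pct reflects the last full shift"],
        inputs_used={key: inputs[key] for key in REQUIRED_INPUTS},
    )
```

Calling `build_recommendation({"line_id": "L1"})` raises `MissingContextError` and names the absent fields, which is the behavior a reviewer wants to see before trusting anything else the assistant produces.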

Where unattended automation becomes hazardous

Extra caution is warranted when outputs feed MES, ERP, or QMS records with limited undo; when the model infers financial or supplier position from partial data; when safety or regulatory language appears in generated procedures; or when the workflow skips the engineer or manager who would normally own the call. These are not arguments against AI. They are arguments against skipping the accountability chain your organization already relies on to stay safe and consistent.

Autonomy should be proportional to risk

Think in tiers: read-only analysis and drafting for internal review; recommendations that require a named approver before execution; closed-loop automation only inside narrow technical guardrails you already use for conventional software. Skipping tiers because the model feels capable is how organizations discover downside in production instead of in a pilot memo.
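The three tiers can be written down as an explicit gate rather than left to judgment in the moment. The following is a minimal sketch under stated assumptions: the `Action` fields and the classification rule are hypothetical and would need to be replaced by criteria your own engineering and quality functions agree on.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Tier(Enum):
    READ_ONLY = 1          # analysis and drafting for internal review
    APPROVAL_REQUIRED = 2  # named approver before execution
    CLOSED_LOOP = 3        # unattended, inside existing guardrails


@dataclass(frozen=True)
class Action:
    description: str
    writes_to_system: bool            # e.g. would change an MES, ERP, or QMS record
    reversible: bool
    inputs_bounded: bool
    has_engineering_guardrails: bool


def classify(action: Action) -> Tier:
    """Assign the lowest-autonomy tier the action's risk profile allows."""
    if not action.writes_to_system:
        return Tier.READ_ONLY
    if (action.reversible and action.inputs_bounded
            and action.has_engineering_guardrails):
        return Tier.CLOSED_LOOP
    return Tier.APPROVAL_REQUIRED


def may_execute(action: Action, approver: Optional[str] = None) -> bool:
    """Return True only if the action is allowed to run as requested."""
    tier = classify(action)
    if tier is Tier.READ_ONLY:
        return False  # nothing to execute; the output is a draft for review
    if tier is Tier.APPROVAL_REQUIRED:
        return bool(approver and approver.strip())  # a named person must own it
    return True
```

In this sketch, an irreversible write is never eligible for closed-loop execution no matter how capable the model appears; it runs only when `approver` names a person.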

DBR77 Vector is positioned for governed industrial intelligence: proprietary reasoning aimed at transformation and operations work, deployment patterns that respect data sovereignty, no training on client data, and human judgment retained where outputs carry real consequence. The product promise is strength with proportionality, not maximum hands-off automation by default.

AI that recommends can be deeply valuable on the shop floor and in the engineering office. AI that decides alone, without aligned controls, outruns accountability. In manufacturing, that asymmetry is the core risk to manage.

Plant checkpoint

Treat “AI That Recommends Is Useful. AI That Decides Alone Is Dangerous” as a decision tool, not background reading. Before the next steering meeting, ask for one artifact that proves your posture: an architecture diagram, a training-policy excerpt, a log sample, a signed workflow classification, or a promotion record. If the room can only tell stories, the program is still a pilot, whatever it is called. Manufacturing AI matures when evidence becomes routine: the same discipline you already expect before a line release, a supplier change, or a major IT cutover. That is the shift from excitement to infrastructure, and it is what keeps programs coherent across audits, turnover, and multi-site expansion.

If leadership wants one crisp decision habit, make it this: name what must be true before usage expands, then review whether it is true on a fixed cadence. That is how governance stops being a narrative comfort and becomes an operating metric your plants can execute.

DBR77 Vector helps manufacturers use industrial AI as governed decision support rather than reckless autonomy.